Compiled: 2026-02-28 09:49:34.6469412005 Emergence

posts/050-emergence.qmd

posts/050-emergence.qmd

5.1 Introduction

posts/050-files/introduction.qmd

This introduction lays out the conditional probability notation and concepts we need and then introduces two models, the P2P and DMC that we go on to consider in more detail.

5.1.1 Conditional Probability

We work in discrete time \(t=0,1,2\) on a probability space \((\Omega,\mathscr F,\mathsf P)\) with a filtration \[ \mathscr F_0\subset \mathscr F_1\subset \mathscr F_2=\mathscr F. \] Here \(\mathscr F_t\) represents the information available at time \(t\). Let \(X\) denote the ultimate loss of a policy (or portfolio), which is revealed by time \(2\). Thus \(X\) is \(\mathscr F_2\)-measurable. \(\mathscr F_0=\set{\emptyset, \Omega}\) contains no information about future losses.

The point of a multi-period model is simple: at time \(1\) the insurer knows more than at time \(0\), and the value assigned to \(X\) at time \(1\) depends on that new information, but further uncertainty remains. We represent the time-1 information by a state variable \(S\).

For simplicity, we assume that \(S\) has a finite range, \[ S\in \set{1,\dots,k}, \] and assume it encodes all information revealed at \(t=1\) \[ \mathscr F_1=\sigma(S). \] This assumption says that time-1 information is exactly the observed state. In particular, a random variable \(Y\) is \(\mathscr F_1\)-measurable, meaning its value is known at \(t=1\), if and only if there is a function \(y\) such that \(Y=y(S)\). In a finite-state model, \(\mathscr F_1\)-measurable objects are lookup tables indexed by the state. In our application, \(S\) might encode information about reported claims used to set case reserves, as well as macroeconomic and environmental information used in bulk reserving.

Actuaries usually meet conditioning as a number, \(\mathsf E[X\mid S=s]\). In a multi-period model, conditioning appears as a function of the realized state. We write conditional expectation as \[ \mathsf P_S(X):=\mathsf P(X\mid \mathscr F_1), \] meaning the best estimate (mean) of \(X\) given state \(S\). Since \(\mathscr F_1=\sigma(S)\), the random variable \(\mathsf P_S(X)\) is \(\mathscr F_1\)-measurable and therefore a function of \(S\). Write \[ \mathsf P_S(X)=r(S) \] which means \[ r(s):=\mathsf P(X\mid S=s). \] (As a random variable, \(r(S)(\omega)=r(S(\omega))\).) The function \(r\) is the best estimate reserve evaluated at time \(1\). The type of reserve depends on the definition of \(X\): it could be case if \(X\) pertains to a single claim or case plus IBNR if \(X\) is modeling reported and unreported claims on a portfolio. Once the state \(S\) is observed, the reserve is the number \(r(S)\). In a finite-state model, the entire conditional expectation \(\mathsf P_S(X)\) is nothing more than the \(k\) values \({r(1),\dots,r(k)}\) attached to the \(k\) states.

5.1.2 Disintegration or Decomposition of Probabilities

We assume a regular conditional probability exists so that conditioning corresponds to a two-stage decomposition or “disintegration” of \(\mathsf P\). The state \(S\) has a marginal law, denoted \(\mathsf P^S\), defined by \[ \mathsf P^S(A) = \mathsf P(S^{-1}(A)) = \mathsf P\set{\omega \middle S(\omega) = k} \] for \(A \subset \set{1,\dots,k}\). In addition, there is a conditional law given the state, denoted \(\mathsf P_S\). Informally, to arrive at at \(t=2\) outcome, we first draw \(S\sim \mathsf P^S\), and then, given \(S=s\), draw the remaining uncertainty according to \(\mathsf P_s\). This is the multi-period version of “state then development.”

With \(S\in\set{1,\dots,k}\), the law \(\mathsf P\) decomposes (disintegrates) into a mixture of the conditional laws \(\set{\mathsf P_s}_{s=1}^k\) with mixing weights given by the state probabilities \(\mathsf P^S(s)=\mathsf P\set{S=s}\). Concretely, for any event \(A\in\mathscr F\), \[ \mathsf P(A)=\sum_{s=1}^k \mathsf P^S(s)\,\mathsf P_s(A). \] This identity is the easiest way to remember what “conditioning on \(S\)” means: \(\mathsf P_s(\cdot)\) is the state-\(s\) world, and \(\mathsf P^S\) averages those worlds using the probability of each state.

Applying the same mixture idea to a random variable \(X\) gives \[ \mathsf P(X)=\sum_{s=1}^k \mathsf P^S(s)\,\mathsf P_s(X). \] Now define the time-1 best estimate reserve by \[ r(s):=\mathsf P_s(X),\qquad \mathsf P_S(X)=r(S). \] Then \[ \mathsf P(X)=\sum_{s=1}^k \mathsf P^S(s)\,r(s)=\mathsf P^S\big(r(S)\big). \] Thus the unconditional mean loss is the state-probability-weighted average of the statewise best estimate reserves.

Remark 5.1. \(\mathsf P_s(\cdot)\) is the regular conditional probability \(\mathsf P(\cdot\mid S=s)\), viewed as a probability measure indexed by the realized state (Kallenberg 2021).

Remark 5.2 (Disintegration and conditional probability). Integration builds a joint law from pieces. Start with a marginal law on states, \(\mathsf P^S\) on \({1,\dots,k}\), and a family of conditional laws \({\mathsf P_s}_{s=1}^k\) on \((\Omega,\mathcal F)\). These pieces define a probability measure \(\mathsf P\) on the product space \({1,\dots,k}\times\Omega\) by \[ \mathsf P({s}\times A):=\mathsf P^S(S=s),\mathsf P_s(A),\qquad A\in\mathcal F. \] Extending by additivity gives a joint law on the product. In this direction, the joint measure is a mixture of the kernels \(\mathsf P_s\).

Disintegration recovers the pieces from the joint law. Now, go the other way: start with a single probability measure \(\mathsf P\) on \({1,\dots,k}\times\Omega\) and define the state variable \(S(s,\omega)=s\). Then \(\mathsf P\) determines a marginal law \(\mathsf P^S\) for \(S\), and, for each \(s\) with \(\mathsf P^S(S=s)>0\), a conditional law \(\mathsf P_s\) on \((\Omega,\mathcal F)\) such that \[ \mathsf P({s}\times A)=\mathsf P^S(S=s),\mathsf P_s(A). \] The family \(s\mapsto \mathsf P_s\) is exactly a regular conditional probability for the second coordinate given \(S=s\).

In this finite-state setting, disintegration solves for the conditional laws: \[ \mathsf P_s(A)=\mathsf P(A\mid S=s). \] The same idea works in general state spaces, but existence and uniqueness become technical, which is why we state it as an assumption when needed.

5.1.3 From Single-Period to Multi-Period Pricing

The actuarial value of cash flows take expectations using \(\mathsf P\) and so multi-period bookkeeping collapses to a mixture across states. The tower property of conditional expectations means there is no difference between single- and multi-period expectations. Pricing replaces \(\mathsf P(\cdot)\) with a one-period pricing functional \(g(\cdot)\) and asks a basic question: If we can price one-period uncertainty, how can we price a multi-period liability?

There are many answers in the literature, and we focus on two: broadly an accident year ultimate view, and a calendar year steady-state view. They agree under \(\mathsf P\), but typically differ under \(g\).

We keep same notation: the \(t=0,1,2\) timeline and write \(S\) for the time-1 state with \(\mathscr F_1=\sigma(S)\). Think of \(S\) as the end-of-calendar-year information used to set reserves, and think of \(X\) as the ultimate loss. For simplicity we assume no discount. Ch REF1 treated discount without emergence and Ch REF2 combines discount and emergence to create the full picture.

The first construction is called P2P or policy to buy a policy pricing, also known as iterative pricing in the literature. It prices in two stages, mirroring the decomposition of \(\mathsf P\) as emerged state and then development. At time \(1\), after observing \(S\), price the remaining one-period uncertainty in \(X\) under the conditional model. This produces a time-1 value \[ V_1 := g^{\mathsf P_S}(X), \] a state-contingent quantity, and hence a function of \(S\).

Then, from time \(0\), price that time-1 random value across states: \[ V_0^{\mathrm{P2P}} := g^{\mathsf P^S}(V_1)=g^{\mathsf P^S}\!\big(g^{\mathsf P_S}(X)\big). \]

The time-1 step, \(g^{\mathsf P_S}(X)\), is an IFRS-style “best estimate plus risk adjustment” conditional on the information available at \(t=1\). The time-0 step prices the randomness of that risk-adjusted reserve across states. This is the source of “risk load on risk load.” Even if \(g\) is calibrated so that \(g^{\mathsf P_S}(X)\) adds a risk adjustment to the conditional best estimate in each state, the second application of \(g\) adds a further adjustment for variation in that reserve across states. We assume that \(g\) is constant, so there is no market cycle shift. Allow \(g\) to evolve over time is an obvious idea, but a massive increase in complexity and is left for future research.

P2P a natural construction when the economic story is genuinely sequential: the contract is repriced, or capital is rolled forward, as information arrives. It is also a natural construction if one wants a time-consistent valuation rule, in the sense that values are built by composing one-period valuations along the filtration.

The second construction, DMC or decoupled marginal cost, takes a different view. Instead of iterating prices along a single accident year, it builds a one-period portfolio that represents a steady-state calendar year of development and prices its one-period risk using \(g\).

The calendar-year viewpoint is familiar to actuaries: in calendar year \(t=0\to1\) the firm experiences one year of development on many accident years. DMC formalizes that idea by forming a one-calendar-period development slice from each accident year, pooling them into a single one-year aggregate, pricing that aggregate with \(g\), and then allocating the priced margin back to the accident years using the natural allocation. It is called decoupled because prior accident years are more mutually independent that sequential development from the same accident year. The base DMC model assumes prior accident years are all independent, a standard modeling choice (Mack 1993).

DMC has a conceptual and practical simplicity. \(g\) is used once, on a one-period calendar-year aggregate. We are only projecting forward one-year, regardless of the number of historical accident years. The resulting risk adjustment is naturally interpreted as a margin recognized over the calendar year. Any remaining margin that is not recognized immediately is deferred by construction, rather than arising from a second application of \(g\).

This makes DMC a natural construction when the operational unit is the calendar year, and when the accounting story earns one year of risk adjustment as one year of development occurs. It ties well with IFRS 17 requirements.

To conclude the introduction:

- P2P is accident-year native. It follows a single policy (or accident year cohort) as information arrives and revalues the remaining liability at each step.

- DMC is calendar-year native. It prices the risk of the next calendar year’s development, as a pooled one-period problem, and then attributes the result back to accident years.

Under \(\mathsf P\), these perspectives are just alternative descriptions of the same expectation. Under \(g\), they generally lead to different values because the mixture across states that makes conditioning easy under \(\mathsf P\) does not commute with nonlinear pricing. The next sections make that difference concrete. We start by introducing an example and then analyzing its P2P and DMC pricing.

5.2 Two Pricing Models for Bernoulli Risks with Simple Information

posts/050-files/two-pricing-models.qmd

Multi-period pricing looks deceptively close to single-period pricing: a loss either happens or it does not, and a one-period pricing rule assigns a premium. The complication is time and information. Real liabilities do not arrive fully formed. Estimates, reports, and model updates arrive in stages, and those stages change the best estimate of the remaining liability. Accounting rules mean adverse development cannot be wished away, and recognizing it has an economic cost.

This section studies a deliberately stylized framework for a two-period emergence with a Bernoulli risk driven by a latent uniform variable. If we can’t solve the problem in this simple world, we’ll never succeed in the real world! We then present two models: P2P and DMC. Both use SRMs to price risk. The P2P model prices a multi-period risk by composing the same one-period pricing rule across time. It can be interpreted as the price for a replicating portfolio consisting of a “policy to buy a policy”, hence the name. The DMC decoupled marginal cost model sets up a calendar year portfolio with a new accident year and a generic prior year’s reserves, and prices it from the top-down using the SRM.

Both models are simple enough to analyze by hand, but still capture two features that matter in practice.

- Information about the latent variable arrives at an interim date and changes the conditional distribution of what remains.

- The pricing rules react to that interim update in a way that depends on risk appetite, as encoded by the underlying SRM.

We see pricing depends both on the SRM and the way information emerges. The key findings are that the “best” interim information depends on risk appetite as encoded in the pricing rule, and that not all appetites value interim information in the same way. Moreover, P2P and DMC may differ in their view of the economic cost of multi-period emergence. In this model, “best” means the interim disclosure that minimizes the two-period price. We analyze when the two-period price is uniformly lower or higher than the single-period price. For P2P we are led to consider whether the SRM is sub- or super-multiplicative, as described in Section 4.11.

These considerations matter. Insurance lines differ in emergence patterns. Property catastrophe risk tends to emerge quickly; casualty and liability risk often emerges slowly, with information arriving over extended periods. This section illustrates how a pricing rules value interim information, and why that valuation differs between risk appetites.

The next subsections describe the framework components.

5.2.1 Discount

We assume the discount rate is zero. The model we are describing reflects loss emergence, not loss discounting. Discount is relatively easy to incorporate once emergence has been addressed. This chapter parallels Chapter 3, which considered discount without modeling emergence.

5.2.2 The Risk

We price a Bernoulli random variable defined as follows. Let \(U\) be uniform on \([0,1]\). Fix a loss probability \(s\in(0,1)\) and define the Bernoulli loss as the indicator function \[ X=\set{U<s}. \] Thus \(X\) takes values in \(\{0,1\}\) with \(\mathsf P(X=1)=s\).

5.2.3 The One-Period Pricing Rule

Fix SRM defined by a concave distortion function \(g\). In one period, the price of the Bernoulli-\(s\) loss is \[ g(X) = g(s). \] We use \(g\) as notation for both the distortion and the induced one-period pricing rule on Bernoulli indicators.

5.2.4 Interim Information

Information flow is extremely complicated. The framework we introduce here restricts information to what we call simple information: a single yes/no message. At time \(t=1\), we receive a message determined by a threshold \(\omega_I\in(0,1)\):

- message A: \(U\le \omega_I\),

- message B: \(U> \omega_I\).

At this point, we avoid \(\sigma\)-algebras and think only in terms of the revealed message. The message partitions the world into two states, and in each state the remaining liability \(X\) has a conditional distribution. We write “\(X\) given the message” as \(X_I\).

5.2.5 The Meaning of “\(X\) Given the Message”

Because \(X=\set{U<s}\) is a function of \(U\), conditioning on the message just means restricting \(U\) to the relevant interval and re-evaluating the chances \(U<s\). There are three cases.

Case 1: \(\omega_I<s\).

If message A occurs (\(U\le\omega_I\)), then \(U<s\) holds for sure, so \[ X_I = X \mid (U\le\omega_I) = 1 \quad\text{(certain loss)}. \]

If message B occurs (\(U>\omega_I\)), then \(U\) is uniform on \((\omega_I,1]\). The event \(\set{U<s}\) becomes a Bernoulli event with reduced probability \[ s_I=\mathsf P(U<s\mid U>\omega_I)=\frac{s-\omega_I}{1-\omega_I}, \] so we can represent the conditional loss as \[ X_I = X \mid (U>\omega_I)\ \sim\ \text{Bernoulli}(s_I). \]

Case 2: \(\omega_I>s\).

If message B occurs (\(U>\omega_I\)), then \(U<s\) is impossible, so \[ X_I = X \mid (U>\omega_I) = 0 \quad\text{(certain no-loss)}. \]

If message A occurs (\(U\le\omega_I\)), then \(U\) is uniform on \([0,\omega]\). The event \(\set{U<s}\) becomes a Bernoulli event with increased probability \[ s_I = \mathsf P(U<s\mid U\le\omega_I)=\frac{s}{\omega_I}, \] so \[ X_I = X \mid (U\le\omega_I)\ \sim\ \text{Bernoulli}(s_I). \]

Case 3: \(\omega_I=s\).

- The message fully reveals the outcome: on \(U\le s\) we have \(X=1\), and on \(U>s\) we have \(X=0\).

Cases 1 and 2 reduce interim disclosure to a clean trade-off.

- In Case 1 we learn either that a loss is certain, or that the remaining loss is less likely.

- In case 2 we learn either that no loss is certain, or that the remaining loss is more likely.

Different risk appetites can value these trade-offs differently. Some place a high premium on ruling out loss, others are more sensitive to confirming loss early, and still others react primarily to how the conditional loss probability shifts. This simple dichotomy foreshadows the results that follow.

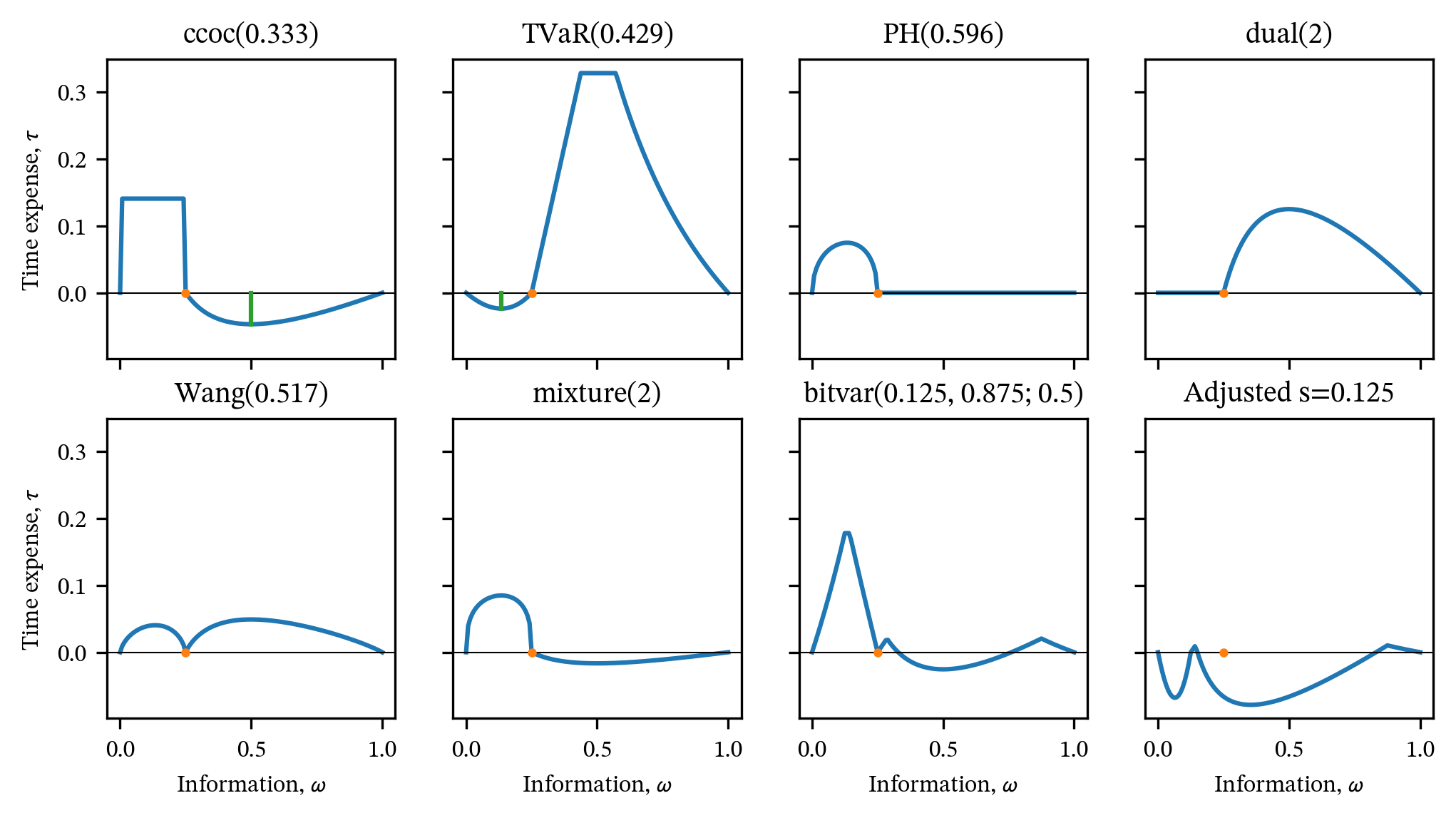

5.2.6 Time expense

Define the time expense of the information choice \(\omega_I\) as the difference between a multi-year pricing functional \(g_I\) depending on information \(I\) and one-year price: \[ \tau(\omega_I)=g_I(X) - g(X). \] A positive value indicates information is time expensive and a negative value that it is time cheap. For a given \(g\), we want to know if and when there is a choice of information making pricing time cheap or expensive for all Bernoulli risks.

5.2.7 Simple BTE

Definition 5.1 A distortion \(g\) is simple Bernoulli time expensive (simple BTE) if \(g_I(X)\ge g(X)\) for every Bernoulli \(X=\set{U<s}\), \(s\in(0,1)\), and every choice of simple information \(\omega_I\in(0,1)\). It is simple Bernoulli time cheap (simple BTC) if there exists some choice of simple information so that \(g_I(X)\le g(X)\).

The distortion \(g\) is simple BTC if there is a way to select information \(\omega_I\) so that the \(g_I\) price is less than the one-year price. It is called simple BTC because we are restricting the information to just revealing \(U>\omega_I\) or \(\le \omega_I\). Using this terminology, we can describe the link between time expense and properties of the risk appetite encoded by \(g\).

5.3 The Policy to Buy a Policy (P2P) Model

posts/050-files/p2p-analysis.qmd

5.3.1 Definition

P2P prices over two periods by iterating the same one-period rule. Continue the framework and notation from Section 5.2.

Step 1. Once the message is known at \(t=1\), the remaining liability from \(t=1\) to \(t=2\) is a one-period Bernoulli (or a constant) in that state. Its time 1 price is therefore:

- in a state where \(X\) is certainly \(1\), the time 1 price is \(1\),

- in a state where \(X\) is certainly \(0\), the time 1 price is \(0\),

- in a state where \(X\) is Bernoulli with adjusted probability \(s_I\), the time 1 price is \(g(s_I)\).

This produces a \(t=1\) value that depends on which message arrived. Call that \(t=1\) random value \(V_1=V_1(\omega)\).

Step 2. At \(t=0\), before the message is known, we price the random value \(V_1\) using the same distortion \(g\).

This yields the two-period P2P price \(g_I(X)\).

For the Bernoulli risk \(X=\set{U<s}\) and information threshold \(\omega_I\in(0,1)\), the P2P price becomes \[ g_I(X)= \begin{cases} g\left(\displaystyle\frac{s-\omega_I}{1-\omega_I} \right)\bigl(1-g(\omega_I)\bigr) + g(\omega_I), & \omega_I < s, \\[1em] g\left(\displaystyle\frac{s}{\omega_I} \right)g(\omega_I), & \omega_I > s. \end{cases} \] This is just “price the time 1 state-values using the time 0 state-prices”. Why? In Case 1 \(V_1\) takes value \(1\) and \(v:=g(s-\omega_I) / g(1-\omega_I))\) with probabilities \(\omega_I\) and \(1-\omega_I\). Since \(1>v\) the SRM prices the first state at \(g(\omega_I)\) and hence the second at \(1-g(\omega_I)\). In Case 2, the outcomes values are \(0\) and \(g(s/\omega_I)\). Now, the second term is larger and picks up weight \(g(1-\omega_I)\) (see REF to how SRMs are computed).

Next, we apply the stochastic framework and one-period pricing rule to explore properties of the P2P price. The ?tbl-states lays out the two states revealed by knowing \(\omega\in(0,\omega_I]\) or \(\omega\in(\omega_I, 1]\). It shows

- the state (conditional) mean,

- the state value \(g(X\mid I)\), which corresponds to the market value of the remaining liability given the interim information,

- the state probability (\(\omega_I\) or \(1-\omega_I\)), the objective probability of the state, and

- the state price as determined by \(g\), the market value price of the state.

For the state price, in case 1 the certain loss is the higher value state and for case 2 it is the possible loss. The lower value state has price given by the complement.

| State | State Mean | State Value | State Probability | State Price |

|---|---|---|---|---|

| Case 1: \(\omega_I < s\) | ||||

| \([0, \omega_I)\) | \(1\) | \(1\) | \(\omega_I\) | \(g(\omega_I)\) |

| \([\omega_I,1]\) | \(s_I=(s-\omega_I)/(1-\omega_I)\) | \(g(s_I)\) | \(1-\omega_I\) | \(1-g(\omega_I)\) |

| Case 2: \(\omega_I > s\) | ||||

| \([0, \omega_I)\) | \(s_I=s/\omega_I\) | \(g(s_I)\) | \(\omega_I\) | \(g(\omega_I)\) |

| \([\omega_I,1]\) | \(0\) | \(0\) | \(1-\omega_I\) | \(1-g(\omega_I)\) |

The first state includes a risk load in both cases, but the state value only includes a risk load in case 2. In both cases \(g_I(X)\) is given by the expectation of the state value with respect to the state price (risk-adjusted) probabilities. The values are \[ g_I(X)= \begin{cases} g\left(\displaystyle\frac{s-\omega_I}{1-\omega_I} \right)(1-g(\omega_I)) + g(\omega_I) & \omega_I < s \\[1em] g\left(\displaystyle\frac{s}{\omega_I} \right)g(\omega_I) & \omega_I > s \\ g(s) & \omega_I = s. \\ \end{cases} \]

5.3.2 Simple time expensive

Next we analyze if and when P2P is time expensive or time cheap. In Case 2, \(\omega_I\ge s\), time expense is controlled by a multiplicative inequality for \(g\) comparing \(g(s)\) to \(g(s/\omega_I)g(\omega_I)\). Case 1 is a little trickier and is best understood using the dual of \(g\). The time expense is can be written \[ \begin{aligned} \tau(\omega_I) &= g\left(\frac{s-\omega_I}{1-\omega_I} \right)(1-g(\omega_I)) + g(\omega_I) - g(s) \\ &= \left(1-\check g\left(1-\frac{s-\omega_I}{1-\omega_I} \right)\right)(1-(1-\check g(1-\omega_I)) + 1 - \check g(1-\omega_I) -(1-\check g(1-s)) \\ &= \left(1-\check g\left(1-\frac{s-\omega_I}{1-\omega_I} \right)\right)\check g(1-\omega_I) - \check g(1-\omega_I) + \check g(1-s) \\ &= -\check g\left(\frac{1-s}{1-\omega_I} \right)\check g(1-\omega_I) + \check g(1-s). \end{aligned} \] This form exactly mirrors \(g(s/\omega)g(\omega)\) and shows how we get a super-multiplicative condition for positive time expense. These calculations motivate the introduction of SBM and SPM in Section 4.11.1. Section 4.11.2 gives conditions for when SBM and SPM fail to hold, which can translate into time cheap or expensive behavior.

5.3.3 A necessary and sufficient condition for Simple BTW

Proposition 5.1 Given a concave distortion \(g\), P2P pricing is simple BTE if and only if \(g\) is sub-multiplicative and \(\check g\) is super-multiplicative.

Proof. By definition, \(g\) is simple BTE if and only if \(g_I(X)\ge g(X)\) for all \(\omega_I\). Start with the (easier) Case 2, where we require \[ g_I(X) = g\left(\frac{s}{\omega_I} \right)g(\omega_I) \ge g(s) \] for all \(\omega_I \ge s\). This inequality holds precisely when \(g\) is sub-multiplicative, taking \(u=s/\omega_I\) and \(v=\omega_I\). If \(g\) were not sub-multiplicative, we could construct a counter-example to simple BTE.

By the calculation in Section 5.3.2, simple BTE requires in Case 1 required \[ \check g(1-s) \ge \check g\left(\frac{1-s}{1-\omega_I} \right)\check g(1-\omega_I), \] i.e., that \(\check g\) is a SPM function.

5.3.4 Examples

Example 5.1 (Constant Cost of Capital (CCoC) Distortion) The CCoC \(g(s)=d+vs\), \(d,v\ge 0\), \(d+v=1\), is SPM but not SBM. The proof of Proposition 5.3, \(g\) should be time cheap for some information in Case 2, \(\omega_I>s\).

The CCoC distortion has a minimum rate-on-line, since \(g(s)\ge d\) for all \(s>0\). No matter how small the risk \(s\), the premium is never lower than \(d\), making \(g\) especially expensive for low-chance loss, but, on the flip side, making it relatively cheap to add to a small risk. The elasticity, \(\eta(0+)\to 0\). This suggests that it is optimal to request interim information that rules out loss for sure and allows a higher chance of loss in the adverse state, i.e., Case 2 \(\omega_I>s\). Further, the marginal increase in premium with \(s\) equals \(v<1\), so it will be more economical to insure \(s_I\). These savings are offset by the risk margin applied in the second period. We now confirm these intuitions to determine the optimal \(\omega_I\).

In the calculation we use \(vd=v(1-v)=d(1-d)\).

In case 1, we work with the dual \(\check g(s)=1-g(1-s)=1-(d + v(1-s))=vs\) for \(s<1\) and \(\check g(1)=1\), \[ \begin{aligned} \tau(\omega_I) &= -\check g\left(\frac{1-s}{1-\omega_I} \right)\check g(1-\omega_I) + \check g(1-s) \\ &= -v^2\frac{1-s}{1-\omega_I}(1-\omega_I) + v(1-s) \\ &= dv(1-s) \\ &> 0 \end{aligned} \] for all \(\omega_I < s\). Thus, there is no possibility of a time savings from Case 1.

In case 2, \[ \begin{aligned} \tau(\omega_I) &= g\left(\displaystyle\frac{s}{\omega_I} \right)g(\omega_I) - g(s) \\ &= \left(d + v\frac{s}{\omega_I} \right)(d + v\omega_I) - (d + vs) \\ &= -d(1-d) + dv\left(\frac{s}{\omega_I} + \omega_I \right) - sv(1-v) \\ &= -dv\left[ 1 - \left(\frac{s}{\omega_I} + \omega_I \right) + s \right] \\ &= -dv\left(1 - \frac{s}{\omega_I}\right)(1-\omega_I) \\ &= dv\left(\frac{s-\omega_I}{\omega_I}\right)(1-\omega_I) \\ &<0 \end{aligned} \] because \(\omega_I > s\).

The optimal choice for \(\omega_I\) minimizes \(f(\omega)=(s/\omega-1)(1-\omega)=\omega + s/\omega - 1 -s\) which occurs when \(f'(\omega) = 1 - s/\omega^2=0\), \(\omega=\sqrt{s}\). Since \(f''(\omega)=2s/\omega^3 >0\) this is a minimum. Since \(\sqrt{s}>s\) the solution is indeed in case 2. The resulting time expense equals \[ \tau(\sqrt{s}) = dv\left(\frac{s-\sqrt{s}}{\sqrt{s}}\right)(1-\sqrt{s}) = -dv(1-\sqrt{s})^2 < 0. \] The minimizing value does not depend on \(d=1-v\). These calculations confirm the intuitions from the first paragraph.

Example 5.2 (Tail value at risk (TVaR)) Next, we consider TVaR. To be consistent with the CCoC example, select the parameter \(p\) to equate prices. When \(s<1\), \(d+vs<1\) and hence \(s<p\) giving the equal price \(p\) \[ \mathsf{TVaR}_p(X)= \frac{s}{1-p} = d + vs \iff p = \frac{d(1-s)}{d+vs}. \]

In many ways, the TVaR distortion is opposite to CCoC: it is tail-risk neutral (Jouini) but very expensive for more likely risks. Thus, we expect maximum time savings by pushing risk into the (cheap) tail creating an outcome with a certain loss and reaping a benefit from a lower expected loss random component. Marginal losses are charged at a rate \(1/(1-p) > 1\) compared to the discounted rate \(v<1\) for CCoC. The algebra confirms these intuitions. It is convenient to write \(k=1/(1-p)=(d+vs)/s\).

In case 1, \(\omega_I < s\), it is easiest to find the minimum price \[ \begin{aligned} g_I(X) &= g(\omega) + g\left( \frac{s-\omega}{1-\omega} \right)(1 - g(\omega)) \\ &= k\omega + k\left(\frac{s-\omega}{1-\omega} \right)(1 - k\omega) \\ &= k\ \frac{s - ks\omega + (k-1)\omega^2}{1-\omega}, \end{aligned} \] since the adjusted frequency \(\bar s<s<1-p\) by construction. Thus all terms are in the sloping part of the TVaR function. A little calculus yields the optimal value \(\omega = 1-\sqrt{1-s}\), which again only depends on the risk \(X\) and not the TVaR parameter.

In case 2, there can be no time savings because the TVaR function is sub-multiplicative (proved in XXXX).

5.3.5 P2P Over Three Periods

This section investigates P2P over three periods. It is not used elsewhere and can be skipped.

First, we need to define the information flow. It is easiest to use a nested flow, using two thresholds on the same latent \(U\). Choose thresholds \(0<\omega_1<\omega_2<1\) and define

- time 1 information: \(G_1=\sigma({U<\omega_1})\)

- time 2 information: \(G_2=\sigma({U<\omega_1},{U<\omega_2})\)

Then \(G_1\subset G_2\) and by time 2 the state space has exactly three atoms: \[ I_1=[0,\omega_1),\quad I_2=[\omega_1,\omega_2),\quad I_3=[\omega_2,1]. \]

DEVELOP!

5.4 Pricing recursion (P2P over three periods)

For Bernoulli, the one-step distortion price is \[ g(\mathrm{Ber}(p))=g(p). \]

At time 2, in each atom \(I_i\), the conditional loss probability is \[ p_i:=P(X=1\mid U\in I_i)=\frac{\lambda(I_i\cap[0,s])}{\lambda(I_i)}. \] So the time-2 booked value (premium/reserve for the final period) is \[ V_2=g(p(U))\in{v_1,v_2,v_3},\quad v_i:=g(p_i). \]

At time 1, the booked value is the distortion price of \(V_2\) conditional on \(G_1\): \[ V_1:=g(V_2\mid G_1). \]

At time 0, the three-period P2P premium is \[ \Pi_{3\mathrm{P2P}}:=g(V_1). \]

Everything reduces to explicit two-point distortion prices, because conditional on \(G_1\) you either land deterministically in \(I_1\), or you are in the mixture of \(I_2/I_3\).

Compute the pieces

Step 1: the \(p_i\) values

There are only three regimes (not \(3\times 3\)) once you impose \(\omega_1<\omega_2\).

\(s\le\omega_1\): \[ p_1=s/\omega_1,\quad p_2=0,\quad p_3=0. \]

\(\omega_1<s\le\omega_2\): \[ p_1=1,\quad p_2=\frac{s-\omega_1}{\omega_2-\omega_1},\quad p_3=0. \]

\(\omega_2<s\): \[ p_1=1,\quad p_2=1,\quad p_3=\frac{s-\omega_2}{1-\omega_2}. \]

Then \(v_i=g(p_i)\).

Step 2: time 1 value \(V_1\)

On \({U<\omega_1}\) you are in \(I_1\) for sure at time 2, so \[ V_1=v_1\quad\text{on }{U<\omega_1}. \]

On \({U\ge\omega_1}\), at time 2 you are in \(I_2\) with probability \[ \alpha:=P(U\in I_2\mid U\ge\omega_1)=\frac{\omega_2-\omega_1}{1-\omega_1}, \] and in \(I_3\) with probability \[ \beta:=P(U\in I_3\mid U\ge\omega_1)=\frac{1-\omega_2}{1-\omega_1}. \] So \(V_2\) conditional on \(U\ge\omega_1\) is two-point: it equals \(v_2\) w.p. \(\alpha\) and \(v_3\) w.p. \(\beta\).

For a two-point variable taking values \(a<b\) with \(P(b)=q\), the distortion price is \[ g=a+(b-a)g(q). \] Therefore, writing \(m:=\min(v_2,v_3)\), \(M:=\max(v_2,v_3)\), \[ V_1=w:=m+(M-m),g(q), \] where \(q\) is the probability of the larger of \({v_2,v_3}\) under \(U\ge\omega_1\). In the common ordering \(v_2\le v_3\) (true if \(p_2\le p_3\)), this is simply \[ w=v_2+(v_3-v_2),g(\beta). \]

So \(V_1\) itself is two-point: \[ V_1=\begin{cases} v_1,&\text{w.p. }\omega_1, \\ w,&\text{w.p. }1-\omega_1. \end{cases} \]

Step 3: time 0 value \(\Pi_{3\mathrm{P2P}}=g(V_1)\)

Again two-point. Let \(m_1:=\min(v_1,w)\), \(M_1:=\max(v_1,w)\), and let \(q_1\) be the probability of the larger of \({v_1,w}\). Then \[ \Pi_{3\mathrm{P2P}}=m_1+(M_1-m_1)g(q_1), \] where \(q_1\) is either \(\omega_1\) or \(1-\omega_1\) depending on whether the larger value is on \({U<\omega_1}\) or its complement.

That is the full closed form.

What to compare it to, and what drives the inequality

The one-year premium is \(g(s)\).

So the question “\(\Pi_{3\mathrm{P2P}}\gtreqless g(s)\)” becomes a question about how the two nested applications of “two-point distortion pricing” compare to the single application \(g(s)\).

The structural drivers are:

curvature of \(g\) (or of \(h=g-\mathrm{id}\)), because \(w\) is already a Jensen-type transformation: \[ w=v_2+(v_3-v_2)g(\beta) \] which you can view as applying \(g\) to a Bernoulli mixing of the time-2 states.

multiplicative-type behavior enters through the conditional probabilities \[ \beta=\frac{1-\omega_2}{1-\omega_1} \] and through the piecewise formulas for \(p_2,p_3\) (ratios of interval lengths). In the 2-period P2P, the key object was \(g(\omega s)\) vs \(g(\omega)g(s)\) etc. Here you get the same kind of objects, but nested: first at time 2 (inside \(v_i=g(p_i)\)), then again at time 1 (inside \(g(\beta)\)), then again at time 0 (inside \(g(q_1)\)).

recap

Let \(U\sim\mathrm{Unif}(0,1)\), \(X=1_{{U<s}}\) (paid at time 3), and use nested thresholds \[ 0<\omega_1<\omega_2<1,\quad I_1=[0,\omega_1),\ I_2=[\omega_1,\omega_2),\ I_3=[\omega_2,1]. \] Time 1 information is \(G_1=\sigma({U<\omega_1})\), time 2 information is \(G_2=\sigma({U<\omega_1},{U<\omega_2})\).

Write the one-period (time-0) distortion premium for \(\mathrm{Ber}(p)\) as \(g(p)\), and the survival dual as \[ \check g(u)=1-g(1-u). \]

The 3-period P2P recursion is

- time 2 booked value: \(V_2=g(P(X=1\mid G_2))\) (three-point),

- time 1 booked value: \(V_1=g(V_2\mid G_1)\) (two-point),

- time 0 premium: \(\Pi_3=g(V_1)\) (scalar).

Because \(V_1\) is always two-point, \(\Pi_3\) always reduces to “two-point distortion pricing” at the last step.

Below are the closed forms, then the answers to your two questions (always above vs existence of lower, and the minimizer).

Closed forms by regime

There are three regimes (relative to \(s\)), not \(3\times 3\).

Regime A: \(s\le\omega_1\)

Only \(I_1\) intersects \([0,s]\), so \(P(X=1\mid I_1)=s/\omega_1\), and \(P(X=1\mid I_2)=P(X=1\mid I_3)=0\).

Then \(V_2\) is \(g(s/\omega_1)\) on \(I_1\) and \(0\) otherwise, and the recursion collapses to a pure product: \[ \Pi_3(\omega_1,\omega_2)=g(\omega_1)\,g(s/\omega_1). \] It is independent of \(\omega_2\).

So the comparison to the single-period premium \(g(s)\) is \[ \Pi_3\gtreqless g(s)\iff g(\omega_1)g(s/\omega_1)\gtreqless g(s). \] This is exactly the super-/sub-multiplicativity test at the factorization \(s=\omega_1,(s/\omega_1)\).

Regime B: \(\omega_1<s\le\omega_2\)

Here \(P(X=1\mid I_1)=1\), \(P(X=1\mid I_3)=0\), and \[ p_2:=P(X=1\mid I_2)=\frac{s-\omega_1}{\omega_2-\omega_1}\in(0,1]. \] Let \[ \alpha:=P(I_2\mid U\ge\omega_1)=\frac{\omega_2-\omega_1}{1-\omega_1}\in(0,1]. \] Then the time-1 conditional (on \(U\ge\omega_1\)) is two-point: value \(g(p_2)\) with probability \(\alpha\), and \(0\) otherwise, so \[ w=g(V_2\mid U\ge\omega_1)=g(p_2)\,g(\alpha). \] And \(V_1\) is two-point: it equals \(1\) with probability \(\omega_1\), and \(w\) with probability \(1-\omega_1\). Therefore \[ \Pi_3(\omega_1,\omega_2)=w+(1-w),g(\omega_1). \]

Key simplification (this is what makes optimization tractable): \[ p_2\,\alpha=\frac{s-\omega_1}{1-\omega_1}=:c, \] which is independent of \(\omega_2\).

So, for fixed \(\omega_1\), varying \(\omega_2\) varies the factorization \(c=p_2\alpha\).

Regime C: \(\omega_2<s\)

This is the survival mirror of Regime A (loss is almost sure on \(I_1\) and \(I_2\), uncertain only on \(I_3\)). It is convenient to write it in terms of survival probabilities and \(\check g\).

Let \(\bar s=1-s\), \(\bar\omega_2=1-\omega_2\). Then, on \(I_3\), the conditional loss probability is \[ p_3:=P(X=1\mid I_3)=\frac{s-\omega_2}{1-\omega_2}=1-\frac{\bar s}{\bar\omega_2}, \] so the conditional survival probability on \(I_3\) is \(\bar s/\bar\omega_2\).

If you run the same algebra as in Regime A but on the complement event \({U\ge\omega_2}\), you get the symmetric product form for the survival-side comparison: \[ 1-\Pi_3(\omega_1,\omega_2)=\check g(\bar\omega_2),\check g(\bar s/\bar\omega_2) \] when you take \(\omega_1\le\omega_2<s\) and ignore the redundant early split (you can make this exact by setting \(\omega_1=\omega_2\); with \(\omega_1<\omega_2\) the extra split sits entirely in the “loss is sure” region and does not change the survival calculation).

So the sign relative to \(g(s)\) is controlled by multiplicativity of \(\check g\) on factorizations of \(\bar s\).

Question 1: is \(\Pi_3\ge g(s)\) for all information, or can it be lower?

You already see the decisive point in Regime A:

If there exists \(x\in[s,1]\) with \(g(x)g(s/x)<g(s)\), then choosing \(\omega_1=x\) (and any \(\omega_2>\omega_1\)) produces a 3-period P2P premium strictly below the single-period premium: \[ \Pi_3=g(\omega_1)g(s/\omega_1)<g(s). \]

If \(g\) is supermultiplicative on \((0,1)\), meaning \[ g(xy)\ge g(x)g(y)\quad\text{for all }x,y\in(0,1), \] then Regime A never gives a reduction, and it forces \(\Pi_3\ge g(s)\) throughout Regime A.

Similarly, Regime C forces you to look at \(\check g\):

- If there exists a factorization \(\bar s=xy\) with \(\check g(x)\check g(y)<\check g(\bar s)\), then you can choose information (thresholds with \(\omega_2<s\)) that makes the 3-period premium smaller than the 1-period premium, via the survival-side product.

So, a clean necessary-and-sufficient style message is:

- “always \(\Pi_3\ge g(s)\) for all nested-threshold information” requires (at least) supermultiplicativity of \(g\) on factorizations of \(s\) and supermultiplicativity of \(\check g\) on factorizations of \(1-s\).

- if either one fails (even locally, at your given \(s\)), there exists a choice of information that makes \(\Pi_3<g(s)\).

Regime B can also produce reductions even if Regime A is neutral, but Regime A and Regime C already give you explicit counterexamples whenever multiplicativity fails in the relevant direction.

Question 2: if reductions exist, which information minimizes \(\Pi_3\)?

This becomes a one-dimensional factorization problem in each regime.

Global minimization strategy

Compute three candidate minima and take the smallest:

Regime A (choose \(\omega_1\in[s,1]\)): \[ \Pi_A^\star(s)=\min_{\omega_1\in[s,1]} g(\omega_1),g(s/\omega_1). \]

Regime C (choose \(\omega_2\in[0,s]\), survival-side): \[ \Pi_C^\star(s)=1-\min_{\bar\omega_2\in[\bar s,1]} \check g(\bar\omega_2),\check g(\bar s/\bar\omega_2). \]

Regime B (choose \(\omega_1\in(0,s)\), then optimize \(\omega_2\in[s,1]\)):

For fixed \(\omega_1\), recall \(c=(s-\omega_1)/(1-\omega_1)\in(0,1)\) and \[ \Pi_3(\omega_1,\omega_2)=g(\omega_1)+(1-g(\omega_1)),g(p_2),g(\alpha), \quad p_2\alpha=c. \] So, for fixed \(\omega_1\), the best choice of \(\omega_2\) is the best factorization of \(c\): \[ m(c):=\min_{x\in[c,1]} g(x),g(c/x), \quad\text{where }x=p_2,\ c/x=\alpha. \] Then \[ \Pi_B^\star(s)=\min_{\omega_1\in(0,s)} \Big(g(\omega_1)+(1-g(\omega_1)),m\big((s-\omega_1)/(1-\omega_1)\big)\Big). \]

Finally, \[ \min_{\omega_1<\omega_2}\Pi_3=\min{\Pi_A^\star(s),\Pi_B^\star(s),\Pi_C^\star(s)}. \]

Interpreting the minimizing information

In Regime A, the minimizer is a single number \(\omega_1^\star\) that gives the “best” factorization \(s=\omega_1(s/\omega_1)\) for the product \(g(\omega_1)g(s/\omega_1)\). Any \(\omega_2>\omega_1^\star\) works.

In Regime C, the minimizer is \(\omega_2^\star\) that gives the “best” factorization of \(1-s\) for the product in \(\check g\); then you pick any \(\omega_1<\omega_2^\star\).

In Regime B, for a chosen \(\omega_1\), the minimizer \(\omega_2\) is the one that makes \((p_2,\alpha)\) realize the minimizing factorization of \(c\) for the product \(g(p_2)g(\alpha)\). Concretely, \[ p_2=\frac{s-\omega_1}{\omega_2-\omega_1},\quad \alpha=\frac{\omega_2-\omega_1}{1-\omega_1}. \] So choosing \(\omega_2\) is exactly choosing the split of \(c\) into \((p_2,\alpha)\).

Without extra structure on \(g\) (for example, log-convexity/concavity of \(g\)), \(x\mapsto g(x)g(c/x)\) can minimize at an endpoint (\(x=c\) or \(x=1\)) or in the interior. The endpoint choices correspond to “put all the refinement into one step”:

- \(\omega_2=s\) gives \((p_2,\alpha)=(1,c)\),

- \(\omega_2=1\) gives \((p_2,\alpha)=(c,1)\), both yielding product \(g(c)\).

Interior minimizers correspond to genuinely using both dates of information.

5.4.1 P2P Literature

5.5 P2P in the literature

I did not find “P2P” or “policy to buy a policy” as a standard label, but the object is very much in the literature under names like backward iteration of premium principles, dynamic (iterated) risk measures, and time-consistent actuarial valuations.

Good “seminar references” that sit right on top of what you are doing:

Pelsser (2016), Time-consistent actuarial valuations. This is explicitly about taking familiar one-step actuarial premium principles and iterating them backward through time. (ScienceDirect)

Goovaerts et al. (2012), on Haezendonck-Goovaerts and (importantly for your story) what happens when you iterate distortion-type functionals; one takeaway is that iterative distortion risk measures collapse severely under strong time-consistency requirements. (Daniel Linders)

Shapiro (2012), Time consistency of dynamic risk measures (scenario trees). This is one of the cleanest statements of “composition in time is highly restrictive” for law-invariant coherent risk measures, which is exactly the kind of phenomenon you see when P2P comparisons end up hinging on sub-/super-multiplicative structure. (Optimization Online)

Bielecki, Cialenco, Liu (2023), Time consistency of dynamic risk measures generated by distortion functions. This is directly about conditional Choquet/distortion constructions in discrete time and what forms of time consistency they do and do not satisfy. (arXiv)

Bielecki et al. survey material on time consistency gives you the broader map (acceptance sets, strong vs weak time consistency, etc.), and it cites the core results (including the “only entropic survives” type messages under strong axioms). (math.iit.edu)

So: the P2P idea is standard, but the name is yours; it is “iterated premium principle / dynamic risk measure via backward recursion.”

5.6 Decoupled Marginal Cost (DMC) Model Pricing and Analysis

posts/050-files/dmc-analysis.qmd

5.6.1 DMC Is Always Bernoulli Time Expensive

The analysis of time expense for DMC is simpler than P2P: DMC is always BTE. The decoupled portfolio consists of business from the current accident period evaluated at \(t=1\) and the prior period evaluated at \(t=2\).

- The current accident period ultimate \(X_0 = 1_{{U_0<s}}\) for uniform \(U_0\),

- The prior accident period ultimate \(X_{-1} = 1_{{U_{-1}<s}}\), \(U_{-1}\) uniform independent from \(U_0\),

- $X :=

- \(R := \mathsf{P}(X_{-1}\mid G)\), the booked reserve at the intermediate time using best-estimate loss cost consistent with IFRS17,

- The decoupled portfolio \(Y := X_0 + R\), a new-period risk plus carried-forward reserve, with the “decoupled” step meaning \(X_0\) is independent of the reserve mechanism.

If we used

THIS FOLLOWS STRAIGHTAWAY from expected value reserving. In each state you are adding noise, so \(X\) second order stochastic dominates (SSD) \(X_1\), and spectral risk measures (generally law invariant coherent risk measures) respect SSD!

First, analyze the reserve random variable \(R\). Let \(A:={U_0<\omega}\), so \(\mathsf{P}(A)=\omega\) and \(G=\sigma(A)\).

In Case 1, when \(\omega<s\), then on \(A\) one has \(U_0<s\), so \(X_0=1\) surely, hence \[ R=\mathsf{P}(X_0\mid G)=1\quad\text{on }A. \] On \(A^c\), \(U_0\) is uniform on \([\omega,1]\), so \[ R=\mathsf{P}(X_0=1\mid A^c)=\frac{s-\omega}{1-\omega}=:r\quad\text{on }A^c. \] So \(R\in\set{1,r}\) with \(\mathsf{P}(R=1)=\omega\), \(\mathsf{P}(R=r)=1-\omega\), and \[ \mathsf{P}R=\omega\cdot 1+(1-\omega)r=s. \]

The DMC portfolio \(Y=X_1+R\) takes four values. Set \(r:=(s-\omega)/(1-\omega)\in(0,s)\), then \[ Y\in\set{r,1,1+r,2}, \] with probabilities \[ \begin{aligned} \mathsf{P}(Y=r) &= (1-\omega)(1-s),\\ \mathsf{P}(Y=1) &= \omega(1-s),\\ \mathsf{P}(Y=1+r) &= (1-\omega)s,\\ \mathsf{P}(Y=2) &= \omega s. \end{aligned} \]

Now let \(g\) be a distortion, and consider the associated pricing operator, \(g(Y)\). As usual, for a nonnegative loss \(Z\), the distortion price is the Choquet integral \[ g(Z)=\int_0^\infty g(\mathsf{P}(Z>t)),dt. \] Here the tail probabilities at the relevant cutpoints are \[ \begin{aligned} \mathsf{P}(Y>r)&=:a=s+\omega(1-s)=s+\omega-s\omega,\\ \mathsf{P}(Y>1)&=s,\\ \mathsf{P}(Y>1+r)&=\omega s. \end{aligned} \] Since the levels are \(0<r<1<1+r<2\), the integral collapses to four rectangles: \[ g(Y) = r + (1-r)\,g(a) + r\,g(s) + (1-r)\,g(\omega s), \] giving a useful closed-form expression.

The DMC premium is net of reserves (to avoid double-counting carried reserves), and is given by \[ \Pi_{\mathrm{DMC}}(\omega):=g(Y)-\mathsf{P}X_0=g(Y)-s. \] The one-period premium for a unit Bernoulli loss is \[ \Pi_1 := g(X_1)=g(s). \] The difference, measuring time expense, is \[ \Pi_{\mathrm{DMC}}(\omega)-\Pi_1 = \frac{1-s}{1-\omega}\,\Big(g(a)+g(\omega s)-g(s)-\omega\Big). \] Because \((1-s)/(1-\omega)>0\), the sign is completely driven by the single inequality \[ g(s+\omega(1-s)) + g(\omega s) \;\;\gtreqless\;\; g(s) + \omega. \] Define the risk-loading function [NOT IDEAL NOTATION] [also TAU somewhere?] \[ h(u):=g(u)-u. \] Then use \(a+\omega s=s+\omega\) to get \[ g(a)+g(\omega s)-g(s)-\omega = h(a)+h(\omega s)-h(s), \] so \[ \Pi_{\mathrm{DMC}}(\omega)-g(s) = \frac{1-s}{1-\omega}\,\Big(h(a)+h(\omega s)-h(s)\Big). \] Then, in Case 1, the comparison of DMC and one-period pricing reduces to whether the risk loading \(h\) satisfies \[ h\big(s+\omega(1-s)\big) + h(s\omega)\;\;\gtreqless\;\; h(s). \] This question has a clean answer as we see in Proposition 5.2. First we work out Case 2.

In Case 2, \(\omega>s\). Now \(:A={U_0<\omega}\) so \(P(A)=\omega\), and \(B={U_1<s}\) so \(P(B)=s\), independent. The conditional mean reserve:

- On \(A\): \(U_0|A\sim\mathrm{Unif}[0,\omega]\), so \[ R=P(X_0=1|A)=P(U_0<s|A)=s/\omega=:q. \]

- On \(A^c\): \(U_0|A^c\sim\mathrm{Unif}[\omega,1]\) and \(\omega>s\), so \(R=0\).

So \(R\in\set{q,0}\) with \(P(R=q)=\omega\), \(P(R=0)=1-\omega\), and \(PR=s\) (since \(\omega q=s\)). The DMC portfolio \(Y=X_1+R\) takes values in \(\set{0,q,1,1+q}\) with the obvious product probabilities. The relevant tails at cutpoints \(0<q<1<1+q\) are \[ \begin{aligned} P(Y>0)&=1-P(Y=0)=1-(1-s)(1-\omega)=s+\omega-s\omega, \\ P(Y>q)&=P(X_1=1)=s, \\ P(Y>1)&=P(X_1=1,R=q)=s\omega. \end{aligned} \] Therefore the distortion price is \[ g(Y)=q\,g(s+\omega-s\omega)+(1-q)\,g(s)+q\,g(s\omega). \] Comparing the DMC premium to one-period premium \(\Pi_1=g(s)\) gives \[ \Pi_{\mathrm{DMC}}(\omega)-\Pi_1 =q\Big(g(s+\omega-s\omega)+g(s\omega)-g(s)-\omega\Big). \] Equivalently, with \(h(u)=g(u)-u\) and using \((s+\omega-s\omega)+s\omega=s+\omega\), \[ \Pi_{\mathrm{DMC}}(\omega)-g(s)=q\Big(h(s+\omega-s\omega)+h(s\omega)-h(s)\Big). \] So Case 2 has the same sign driver as Case 1: \[ g(s+\omega-s\omega)+g(s\omega)\gtreqless g(s)+\omega \] (or the \(h\) version). Combining the two we get:

Proposition 5.2 For Bernoulli risks and simple information as above, the DMC price is greater (less) than the one-period price CASE 1 if and only if \(g\) is concave (convex).

Proof. Note the two affine decompositions \[ a = (1-\omega)s+\omega\cdot 1,\qquad \omega s=\omega\cdot s+(1-\omega)\cdot 0. \]

If \(h\) is concave on \([0,1]\) (equivalently, \(g\) is concave), then Jensen’s inequality gives \[ h(a)\ge (1-\omega)h(s)+\omega h(1)=(1-\omega)h(s), \] and \[ h(\omega s)\ge \omega h(s)+(1-\omega)h(0)=\omega h(s). \] Adding gives \[ h(a)+h(\omega s)\ge h(s), \] so \[ \Pi_{\mathrm{DMC}}(\omega)\ge g(s)\quad\text{for every }\omega\in[0,s]. \]

If \(h\) is convex on \([0,1]\) (equivalently, \(g\) is convex), all inequalities reverse, so \[ \Pi_{\mathrm{DMC}}(\omega)\le g(s)\quad\text{for every }\omega\in[0,s]. \]

Introducing reserve randomness (a mean-preserving perturbation away from the constant reserve \(s\)) pushes the DMC premium up for concave distortions, and down for convex distortions.

Remark 5.3 (Union/intersection of independent events). Let \(B={X_1=1}\) with \(\mathsf{P}(B)=s\) and \(A={U_0<\omega}\) with \(\mathsf{P}(A)=\omega\), independent.

Then \[ \mathsf{P}(A\cap B)=\omega s,\qquad \mathsf{P}(A\cup B)=s+\omega-s\omega=a. \] So the sign driver is \[ g(\mathsf{P}(A\cup B))+g(\mathsf{P}(A\cap B)) \;\;\gtreqless\;\; g(\mathsf{P}(B))+\mathsf{P}(A). \] It is a “capacity additivity defect” statement, but with the second marginal not distorted (it is \(\omega\), not \(g(\omega)\)). Rewriting it as \(h(a)+h(\omega s)\gtreqless h(s)\) makes that asymmetry explicit: only the risk-loading part of \(g\) matters. Compare: the capacity \(c=g\,\mathsf P\) is sub- or super-modular exactly when \(g\) is concave/convex.

Since \(\omega=0\) gives a deterministic reserve \(R\equiv s\), one has equality \(\Pi_{\mathrm{DMC}}(0)=g(s)\).

If \(g\) is differentiable (use right-derivatives at \(0\) if needed), define \[ H(\omega):=g(s+\omega(1-s))+g(s\omega)-g(s)-\omega, \] so \(\Pi_{\mathrm{DMC}}-g(s)\) has the same sign as \(H\).

Then \[ H'(0)= (1-s)g'(s) + s g'(0+)-1. \] So the immediate direction of the DMC effect from “turning on” a small amount of information is set by the combination of the local slope at \(s\) and the near-zero slope.

This is a nice place to connect to your elasticity discussions, since \(g'(0+)\) and \(g'(s)\) encode “how expensive it is to move a little probability mass” at those points.

5.6.2 DMC Over Three or More Periods

No change. Really easy. Allocation straight-forward and built in.

5.6.3 DMC Literature

I did not find “decoupled marginal cost” or “DMC” as a named method in the literature. My sense is that the label is home-brewed, but the ingredients are not. What you call DMC lines up with established strands:

- marginal cost of risk / Euler allocation / gradient allocation, including explicitly multi-period settings

Bauer and Zanjani (work circulated as 2011 and also in CAS material) develop marginal cost of risk and connect it to Euler allocation, with multi-period interpretation showing up in CAS-facing versions. (University of Ulm)

Denault (2001), Coherent allocation of risk capital: foundational for why coherent risk measures + marginal contributions give “economically sensible” allocations. (ressources-actuarielles.net)

Guo (2021), Capital allocation techniques: review and comparison (Variance): a modern survey that places Euler/marginal methods in a broader allocation taxonomy. (variancejournal.org)

- distortion-based allocation in insurance (very close to your setup, but framed as allocation rather than “decoupling”)

- Tsanakas (2004), Dynamic capital allocation with distortion risk measures: this is probably the closest single citation in spirit, because it is explicitly dynamic and explicitly distortion-based in an insurance context. (City Research Online)

- accounting-style “best estimate + risk margin” context (IFRS 17 / cost of capital / risk adjustment)

- Practitioner/actuarial notes on IFRS 17 risk adjustment and cost-of-capital style risk margins are consistent with your “subtract the mean; price the margin” decomposition, even if they do not use your DMC construction. (Institute and Faculty of Actuaries)

So: DMC as a named method seems novel, but it sits naturally at the intersection of (i) dynamic distortion risk measurement, (ii) marginal/Euler capital allocation, and (iii) best-estimate-plus-margin accounting decomposition.

If you want to position DMC in a literature review, the cleanest claim is:

- “DMC is an internally consistent construction for allocating the risk margin (above best estimate) in a steady-state multi-period setting; it is closely related to Euler/marginal capital allocation and to dynamic capital allocation under distortion risk measures, but I have not found it presented in this decoupled steady-state form or under this name.”

That is accurate relative to what I can verify from the sources above.

5.7 Reconciliation between DMC and P2P

posts/050-files/reconciliation-dmc-p2p.qmd

DMC and P2P are two multi-period constructs that answer different questions:

P2P is a replicating-policy viewpoint: price the random reserve booked at time 1 by pulling it back to time 0 with the same pricing functional.

DMC is an accounting-consistent marginal-cost viewpoint: treat best-estimate reserves as “funded” and price only the incremental risk margin created by reserve uncertainty, using a decoupled steady-state portfolio.

That difference maps cleanly to two internal problems: front-line pricing of policies vs top-down allocation of capital and margin.

5.7.1 P2P

P2P is a policy is “a policy to buy a policy later”: at time 0 you buy the right/obligation to pay the time-1 booked reserve, which itself is random because of emergence. You price that random reserve using the same machinery you use to price liabilities.

P2P Strengths

Directly tied to a transaction story: what is the premium today for a contract whose eventual booked reserve is random?

Naturally integrates information design: you can ask whether earlier learning increases or decreases premium, and what information is valuable.

Captures time-consistency questions: the recursion forces you to confront whether your pricing functional composes sensibly over time.

Useful for product design and underwriting: it makes explicit when “better info” makes the product cheaper/more expensive, which aligns with selection, pricing segmentation, and monitoring.

Clean conceptual link to hedging/replication: you can explain it to finance-minded stakeholders as pricing a random future obligation.

P2P Weaknesses

Sensitive to the chosen time-consistency convention: many distortions are not dynamically consistent, so P2P can behave in ways that feel unintuitive unless you explicitly commit to a dynamic framework.

The inequality drivers can be more technical (sub/super-multiplicativity, plus survival dual effects), which can be harder to sell internally.

It can mix “pricing” and “information policy” in a way that regulators/accounting may not want: accounting wants best estimate plus explicit margin, not an implicit “value of waiting”.

Less obviously aligned with IFRS 17 mechanics unless you carefully map each step to contractual service margin, risk adjustment, and discounting.

P2P Use Cases

Pricing problems where the firm truly has an option-like feature: repricing, cancellations, adjustable terms, experience refunds, retrospective rating, or explicit mid-term premium adjustments.

Underwriting governance: deciding what info to collect, when, and how it changes price.

Product and portfolio steering: what lines benefit from earlier emergence, and why.

5.7.2 DMC

In steady state, new business and carried reserves coexist. The best estimate reserve is not “profit”; it is funding for expected future cash flows. The object of interest is the risk margin created by uncertainty around those reserves, priced marginally and allocated to units.

DMC Strengths

Strong alignment with accounting decomposition: “premium = best estimate + margin” is exactly how internal finance teams want to talk, and DMC makes the subtraction of the mean explicit.

Robust monotonicity story: when the decoupling independence assumption is appropriate and you use a coherent spectral/concave distortion, adding mean-zero reserve noise increases the risk margin. That is easy to explain and hard to argue with.

Naturally suited to top-down allocation: you can interpret the DMC margin as the incremental cost of carrying volatility from the past while writing new business.

Cleaner levers: curvature/convex-order behavior drives direction, so fewer fragile edge cases than P2P.

Operationally interpretable: “what does this line contribute to group risk margin given the group’s pricing functional?” is exactly the allocation question.

DMC Weaknesses

Depends on the decoupling assumption: if new business and reserve development are correlated (common inflation, legal environment, catastrophes, claims operations), then “independent copy” can understate diversification drag or overstate it, depending on correlation sign.

More of an internal cost model than a market price model: it is excellent for allocating financing costs, but it is not automatically the right customer-facing premium unless you also model competitive/market constraints.

Can underrepresent option-like features: if the firm can reprice at renewal or adjust terms after observing emergence, DMC does not automatically capture that “control”.

Steady-state assumption is strong: it works best when the portfolio mix and development patterns are stable; transitions and growth/shrinkage need explicit adjustments.

DMC Use Cases

Capital and margin allocation, performance measurement, and steering: allocate group financing costs to units, and penalize lines that generate long-tailed volatility.

Managerial accounting: allocating risk margin to accident years, lines, or underwriting cells, consistent with “best estimate + risk adjustment”.

Planning and budgeting: cost of growth in long-tailed lines, and “drag” from legacy reserves.

5.7.3 Insurance Applications

For an insurer pricing business and allocating top-down financing costs to units; some units are capacity-short or strategically favored. For that problem, DMC is the better default:

It aligns with internal finance language and with IFRS-like decomposition.

It behaves monotonically under coherent spectral pricing in a way that supports defensible allocation rules.

It directly targets “marginal cost of carrying volatility,” which is what group financing cost allocation is trying to measure.

P2P belongs as a companion model:

Use P2P when management decisions or contract features create real intertemporal optionality: repricing ability, early settlement strategies, commutation, or underwriting that changes after time-1 information.

Use P2P as an “information value and control value” diagnostic on top of DMC: it tells you when earlier emergence changes cost because it changes what you will do (or can do), not merely because it changes the distribution.

DMC is the baseline internal pricing, hurdle rates, and allocation of group financing costs across units and accident years. P2P is a strategic overlay for units where action after emergence is material (renewal repricing, claims settlement policy, reinsurance optimization, and any line with strong mid-course management).

In governance terms: DMC is the accounting-consistent cost-of-risk engine; P2P is the decision-and-information engine.

dup; rationalize

Use DMC as the primary enterprise method: pricing-to-allocate inside a going concern, consistent with best estimate plus risk margin language, and operationally implementable with a triangle-like data layout.

Use P2P as a “block valuation / contract valuation” method and as a diagnostic for information value and dynamic effects when you really are pricing a finite object (multi-year cat bond, long-tail treaty with limited ability to reprice, runoff commutation decisions, etc.).

Treat P2P as a stress test against DMC: when P2P and DMC disagree strongly on a block, that flags material dynamic optionality (repricing/management actions, timing of emergence, information flow). For enterprise allocation you still default to DMC; for special structures you may carve out the block and value it with P2P.

This division of labor also reads well to a skeptical audience: DMC is the practical management accounting engine; P2P is the theoretically clean valuation operator you apply when “this thing is a block” is actually true.

5.7.4 Mechanics

DMC is a steady-state calendar-year picture. At time 0 you already have:

- prior accident-year reserves rolling forward,

- plus new accident-year business entering.

So the portfolio is “stationary”: as old cohorts run off, new cohorts replace them. That makes DMC naturally suited to ongoing pricing, allocation, and performance measurement of a going concern.

P2P, as you are using it, is a single-cohort valuation run backward through time. You start with a set of liabilities, you condition on emergence, and as time advances those liabilities resolve and disappear. Unless you explicitly add new cohorts, the portfolio shrinks. That makes P2P naturally suited to valuing a block (or a contract) rather than running a steady-state enterprise.

So your sentence is right: DMC “has all the bits” because it is built on a triangle/composition that already includes replacement of run-off by new business. P2P needs that replacement added as an explicit modeling choice.

We can reconcile them in two clean. First, use a cohort-indexed P2P in steady state. Let there be accident years \(k\in\mathbb{Z}\), and at calendar time \(t\) the firm holds a stack of cohorts \({k\le t}\) at various development ages.

Define a P2P recursion for each cohort’s remaining liability, and define the firm-level “time-\(t\) reserve random variable” as the sum across all open cohorts.

Then the P2P valuation at time \(t\) is applied to the whole stack. When a cohort pays out and disappears, a new cohort is added (the next accident year). In stationary conditions, the distribution of the stacked reserve vector becomes time-homogeneous.

This is the direct analog of “steady state P2P,” and it removes the disappearing-units issue.

Operationally: P2P becomes a rolling valuation operator applied each period to the whole reserve stack, not a one-off valuation of a single block.

Second, interpret P2P as a unit-level pricing overlay, not an enterprise model. Keep P2P as answering: “what is the time-0 value of writing one new policy (or one new cohort) given how emergence affects the booked reserve at time 1 and later?”

Then the enterprise steady state is constructed by summing across many such new policies written each period (a stream of cohorts). That sum is exactly what DMC is built to represent.

So P2P remains local (contract/cohort pricing and information value), and DMC remains global (steady-state allocation and financing cost).

These differences matter for allocation. If you allocate top-down costs:

- DMC gives you stable per-unit charges because the unit is always present in the steady-state stack.

- P2P on a single cohort yields charges that vanish as the cohort runs off, which is appropriate for block valuation but can look odd as an operating-unit charge unless you replace it with “the stream of new cohorts written by that unit.”

So a fair P2P-based allocation at the unit level typically allocates to the unit’s pipeline (its expected future new business) as well as its current in-force, not just its existing runoff.

In conclusion,

- The “disappearing units” effect is intrinsic to P2P if you apply it to a fixed block.

- To make P2P comparable to DMC as an enterprise allocation tool, you either (i) add cohort replacement explicitly (steady-state P2P), or (ii) treat P2P as a marginal new-business overlay whose enterprise implication comes from aggregating across a rolling stream of cohorts.

5.7.5 P2P is an operator; DMC is an allocation system

P2P is a mapping from “a cash-flow profile with an information structure” to “a time-0 value,” built by backward recursion. That makes it ideal for valuing a standalone object: a block, a treaty, a cat bond with multi-year emergence, a runoff portfolio, or anything where “this thing runs off and ends” is the correct ontology.

DMC is built to live inside a going concern. It prices and allocates the incremental margin inside a calendar-year container that already includes:

- the current underwriting year,

- the carried reserves that affect this year’s financing cost,

- and the natural replacement of runoff with new business (steady state).

So DMC is not just a valuation; it is a rule for splitting the group’s financing cost across units and time.

Why “rolling P2P” is usually not worth it for enterprise allocation

You can absolutely create a stationary P2P enterprise model by adding a stream of new cohorts and specifying their joint dependence with legacy development, underwriting mix, growth, rate changes, reinsurance, and operational responses. But that forces you to assume a full generative model of the insurer through time.

That is the core problem: P2P becomes a large structural model of the business, not just a pricing rule. If you are using it for top-down allocation, you are implicitly building a theory of the future book. The output then depends more on those structural assumptions than on the pricing functional itself.

DMC avoids that. It is local in time: it needs “what is in this calendar year” (new business distribution and the reserve stack you carry into the year), and it charges margin accordingly. It is much less hostage to assumptions about future volume and mix.

Why DMC feels like the “linearization” you want

Your description is exactly right: DMC replaces the realized, path-dependent accident-year reserve state with the expected reserve under the relevant information, and it then charges a risk margin for uncertainty around that best estimate.

That is why it is stable: it decouples pricing from “happenstance where reserves are currently up.” You allocate based on structural risk, not on the current draw from the stochastic process.

In that sense, DMC behaves like a linear (or linearized) marginal cost rule inside a nonlinear pricing world: you take the nonlinear price functional and apply it to the incremental risk components in a controlled, steady-state way.

5.8 Proofs

posts/050-files/proofs.qmd

All deferred proofs.

5.9 Literature and Context

posts/050-files/literature.qmd

Here’s how the literature thinks about things.

From Bielecki et al. (2017). Other refs

- Comonotonicity: Dhaene et al. (2002)

- Conditional comonotonicity: Jouini and Napp (2004), Cheung (2007), Cheung (2012).

- SRMs: Föllmer and Schied (2016), Kusuoka (2001)

- Probability: Hoffmann-Jørgensen (1994b), Hoffmann-Jørgensen (1994a)

- Multi-period risk measures: Bielecki et al. (2024)

Definition 5.2 (Dynamic risk measures) A family of functions \(f_t\) is

- Adapted: appropriately measurable

- Normalized: value of \(0\) is \(0\)

- Local \(1_Af_t(X)=1_Af_t(1_AX)\)

- Cash additive \(f_t(X+m)=f_t(X)+m\), \(m\) is \(t\)-measurable

- Monotone if \(X\le Y\implies f_t(X)\le f_t(Y)\)

- Sub-additive

- Positive homogeneous

- Quasi concave

- Law invariant

Bielecki et al. (2017) intro

The main idea behind this type of time consistency is that if “tomorrow”, say at time \(s\), we accept \(X \in L^p\) at level \(\phi_s(X)\), then “today”, say at time \(t\), we would accept \(X\) at any level less than or equal to \(\phi_s(X)\), adjusted by the information \(F_t\) available at time \(t\). Similarly, if tomorrow we reject \(X\) at level \(\phi_s(X)\), then today, we should also reject \(X\) at any level greater than or equal to \(\phi_s(X)\), adapted to the information \(F_t\).

Definition 5.3 (Notions of consistency)

- Strong time consistency: \(\rho_{t+1}(X)=\rho_{t+1}(Y)\implies \rho_{t}(X)=\rho_{t}(Y)\).

- Recursive time consistency: \(\rho_t(X) = \rho_t(-\rho_{t+1}(X))\).

- Local (Scandolo)

- (Weak) Acceptance time consistency: acceptable in the future means acceptable now, ${t+1}(X){t}(X)

- (Middle) Rejection time consistency: preferred in the future implies preferred now \(\rho_{t+1}(X) \ge \rho_{t+1}(Y) \implies \rho_{t}(X) \ge \rho_{t}(Y)\), note these are inequalities of functions that must hold a.s.

- Sub-martingale time consistent: \(\rho_t(X) \ge \mathsf E[\rho_{t+1}(X)\mid \mathscr F_t]\)

- Super-martingale time consistent: \(\rho_t(X) \le \mathsf E[\rho_{t+1}(X)\mid \mathscr F_t]\)

Acceptance: accept a risk at any price \(\ge\) model price \(\rho_t(X)\).

Acceptance index = \(p\) from TVaR, higher \(p\) more acceptable

Only mean, max, and entropic are STC, so anything else can fail

\(X\) and information are tied, generally information \(I\) and \(X=X(I)\) (mixture, compounds)

Information is irrelevant = independent

Information is fully informative

Both these lead to the same price

Information changes assessment of probabilities of events, not outcomes. Does change possible outcomes.

Remember what’s a function and what’s a number!

5.9.1 Acceptance Sets

- \(\Omega\) set of scenarios

- \(X:\Omega\to\mathbb R\) a financial position, \(X(\omega)\) is the discounted net worth of the position of the end of the period if the scenario \(\omega\) is realized

- AKA P&L

- Quantify the risk of \(X\) by some number \(\rho(X)\) for \(X\in \mathcal X\)

- Monotone: if \(X\le Y\), then \(\rho(X) \ge \rho(Y)\), (notice swap)

- Cash invariance: for \(m\in \mathbb R\), then \(\rho(X+m)=\rho(X)-m\) (again, notice sign)

- AKA translation invariance

- QUOTE (Föllmer and Schied 2016, sec. 4.1) TI is motivated by the interpretation of \(\rho(X)\) as a capital requirement, i.e., \(\rho(X)\) is the amount which should be added to the position \(X\) in order to make it acceptable from the point of view of a supervising agency. Thus, if the amount \(m\) is added to the position and invested in a risk-free manner, the capital requirement is reduced by the same amount. In particular, cash invariance implies \(\rho(X + \rho(X))=0\) and \(\rho(m) = \rho(0) - m\) for all \(m\in\mathbb R\). For most purposes it would be no loss of generality to assume that a given monetary risk measure satisfies the condition of Normalization: \(\rho(0) = 0\).

- SM Analogously, thinking of \(\rho\) as a pricing metric, \(\rho(X)\) is the amount that should be added to the position \(X\) in order to make it acceptable from the point of view of an underwriter or management.

- Corresponds to an economically meaningful and observable (from an arms-length transaction) as opposed to a meaningless (all capital stands behind all risks) and unobservable (never booked anywhere, a notional allocation) construct.

- And you still don’t have the premium even after you allocate capital because you don’t know the cost of capital, which varies by layer!

- Acceptable risks \(\mathcal A_\rho= \{ X\mid \rho(X)\le 0 \}\), positions that do not require more assets to be acceptable.

- Get FS Theorem 4.6.

Theorem 5.1 (Pricing Metrics and Acceptance Sets (Föllmer and Schied (2016) Thm 4.6)) Suppose tha \(\rho\) is a monetary risk measure with acceptance set \(\mathcal A=\mathcal A_\rho\).

- \(\mathcal A\) is nonempty, closed in \(\mathcal X\) with respect to the supremum norm \(||\cdot ||\), and satisfies the following two conditions; \[ \inf \{ m\in\mathbb R\mid m\in \mathcal A \} > -\infty \quad X\in \mathcal A,\ Y\in\mathcal X,\ Y\ge X \implies Y\in \mathcal A. \]

- \(\rho\) can be recovered from \(\mathcal A\) \[ \rho(X) = \inf \{m \in\mathbb R\mid m + X \in\mathcal A\}. \]

- \(\rho\) is a convex risk measure if and only if \(\mathcal A\) is convex.

- \(\rho\) is positively homogeneous if and only if \(\mathcal A\) is a cone. In particular, \(\rho\) is coherent if and only if \(\mathcal A\) is a convex cone.

5.9.2 Dynamic risk measures and time consistency

- FS Sec 11.1: \(\mathcal A_t = \{X \mid \rho_t(X) \le 0\}\), “can thus be viewed as the conditional capital requirement needed at time \(t\) to make a financial position \(X\) acceptable at that time.”

- SM interpret \(\rho_t(X)\) as the amount that should be added to the position \(X\) in order to make it acceptable at time \(t\) from the point of view of an underwriter or management.

Definition 5.4 Strong time consistent if \(\forall X,Y,t\ge 0\) \[ \rho_{t+1}(X) \le \rho_{t+1}(Y) \implies \rho_{t}(X) \le \rho_{t}(Y). \]

Lemma 5.1 Strong time consistency is equivalent to each of

- \(\rho_{t+1}(X) = \rho_{t+1}(Y) \implies \rho_{t}(X) = \rho_{t}(Y)\).

- Recursiveness: \(\rho_t = \rho_t(-\rho_{t+1})\) for \(t=0,1,\dots, T\).

Proof. STC implies (a): if \(a = b\) then \(a \le b\) and \(b\le a\).

implies (b): \(\rho_{t+1}(-\rho_{t+1}(X))=\rho_{t+1}(X)\) because \(\rho_{t+1}(X)\) is known at \(t+1\) and so can be treated as a constant. Applying a) with \(Y=-\rho_{t+1}(X)\) gives \(\rho_t(X)=\rho_t(-\rho_{t+1}(X))\).

implies TC: if \(\rho_{t+1}(X)\le\rho_{t+1}(Y)\) then \(\rho_t(-\rho_{t+1}(X))\le \rho_t(-\rho_{t+1}(Y))\) by monotonicity, and so \(\rho_t(X)\le \rho_t(Y)\) by (b).

The one-step walk-forward in b) can be extended to \[ \rho_s = \rho_s(-\rho_t) \] for \(0\le s<t\).

Example 5.3 The following are STC.

- The mean

- The maximum

- \(\rho_t(X) = \displaystyle{1}{\beta} \log \mathsf E[e^{-\beta X}\mid \mathscr F_t]\).

In fact, CHECK! Kupper and Schachermayer (2009) shows these are the only STC LI risk measures(!). The mean is \(\beta=0\) and the max is \(\beta=\infty\).

Example 5.4 If \(\rho_t\), \(t=0,\dots,T\) is a sequence of XX conditional risk measures then the recursive definition \[ \tilde \rho_T = \rho_T\quad\text{and}\quad \tilde \rho_t= \tilde \rho_t(\tilde \rho_{t+1}) \] defines a time-consistent sequence of conditional risk measures. Thus P2P is time-consistent. This definition takes away any choice about how to “pull-back” a future time to the present.

Very standard examples showing TVaR is not STC. Info is sigma algebra generated by one of the variables (A+B model)

Remove one-step conditions.

Convert into stopping times.

Given any sequence of \(\rho_t\) define the recursive \(\tilde\rho_T=\rho_T\) and \(\tilde \rho_t = \rho_t(-\tilde\rho_{t+1})\) (P2P approach), Ex 11.2.3.

Define the one-step-ahead acceptance set by \[ \mathcal A_{t,t+1} = \{ X\in L^\infty_{t+1}\mid \rho_t(X) \le 0 \}. \] These are risks whose outcome is known by the end of the next period and that are currently acceptable.

Lemma 5.2 Let \((\rho_t)_t\) be a sequence of monetary conditional risk measures (cond pos homog, convexity applies for risk in \(L_t\)). Then the following equivalences hold for all \(t\) and \(X\)

- \(X\in \mathcal A_{t,t+1} + \mathcal A_{t+1}\iff -\rho_{t+1}(X)\in\mathcal A_{t+1}\)

- \(\mathcal A_t\subseteq \mathcal A_{t,t+1} + \mathcal A_{t+1}\iff \rho_t(-\rho_{t+1}) \le \rho_t\)

- \(\mathcal A_t\supseteq \mathcal A_{t,t+1} + \mathcal A_{t+1}\iff \rho_t(-\rho_{t+1}) \ge \rho_t\)

Proof. Coming soon.

234 = Roorda and Schumacher (2007), 271=Tutsch (2008), 274=Weber (2006).

Hence \(\rho_t\) is STC iff \(\mathcal A_t = \mathcal A_{t,t+1} + \mathcal A_{t+1}\).

Definition 5.5 \(\rho_t\) is weak time consistent if \[ \rho_{t+1}(X)\le 0\implies \rho_t(X)\le 0. \]

Then WTC \(\iff \mathcal A_{t+1}\subseteq \mathcal A_t\).

5.9.3 The Coherent Case

Stable if pasting of two measures (at a random time) is still in the set. See p.344 of book.

Föllmer and Schied (2016) Thm 11.22. says that if \(\rho_t\) is a sensitive sequence of convex conditional risk measures and \(\rho_0\) is coherent then the following are equivalent:

- \(\rho_t\) is STC

- It is represented as the sup over a stable set of measures.

In particular, each \(\rho_t\) is coherent.

5.10 Policy to Buy a Policy

posts/050-files/p2p.qmd

This section presents an alternative approach to multi-period pricing: the policy to buy a policy. It is conceptually orthogonal to the approach we take, looking at a single risk over time rather than slices of risk in a single year, but aligns with much of the multi-period pricing literature and it is presented for that reason. It also serves to highlight the complexities of the standard approach, leading to stochastic-on-stochastic (SoS) simulations, that our proposed method avoids.

5.10.1 Context and Approach

We can price a multi-period risk using a single-period risk pricing rule by buying a policy to buy another policy to move between periods. In a two-period model, at the start of the second period certain information is revealed that necessitates the reevaluation of the liability. At this juncture, the company faces a one-period risk based on the revised information, which can be priced in the market using a one-period pricing rule. By purchasing a policy whose payout equals the distribution of these prices, we can transfer the risk across two periods. At inception, we buy a policy to buy another policy; it matures at the end of the first period with a payout exactly equal to the amount required to buy a second policy to transfer the risk in the second period. It is important to note that the second period’s risk is contingent upon (is a function of) the information revealed during the first period.

In detail, consider a two-period risk where partial information is revealed at \(t=1\) and the final outcome at \(t=2\). Assume we are given an SRM that prices one-period risk. We define the associated policy to buy a policy (P2P) price of the two-period risk to be the price at \(t=0\) of a policy whose payout at \(t=1\) equals the cost of the insurance for the second period, given the emerged information. The SRM is used to determine the second period price, conditional on the information. This random variable, in turn, is priced by the SRM over the first period. This mechanism can be extended to arbitrarily many periods, but we consider only the two period setting.

We investigate the impact of partial information by comparing the P2P price and the single-period price using the underlying SRM. We call a risk time expensive for the SRM if the P2P price is greater than or equal to the SRM price and time cheap otherwise. Time expensive risks cost more to insure over two periods; time cheap ones cost less. The time expense (or cost, if negative) is the difference. What can we say about the time expense for different multi-period models?

Here are the properties of this “P2P”, policy to buy a policy.

Definition: P2P is a financial instrument purchased at \(t=0\) that allows the holder to purchase a subsequent insurance policy (Policy B) at time \(t = 1\) based on new information available at that time.