Compiled: 2026-03-09 17:10:48.4108495004 Distortions and Single-Period Pricing

posts/040-distortions-single-period.qmd

In a single period there is no emergence, but there is discount. Start with discount = 0, then see 090. Explain the PIR textbook model.

4.1 Single-Period Pricing

posts/040-files/010-single-period-pricing.qmd

point · point

This section summarizes the approach to single-period pricing outlined in PIR and developed further in CMM. It assumes the insurance market has four interacting entities: insureds, insurers, investors and a regulator, as displayed in Figure 4.1.

InsCo is a limited liability company that intermediates between insureds and investors. InsCo’s customers are insureds (policyholders) who are subject to risks they wish to insure. Insureds who use insurance for risk transfer or financing are sensitive to insurer quality and possible default because it correlates with their own misfortune.

Insurance legal entities serve two principal purposes. First, to provide statutory insurance such as mandatory automobile liability. Here, the regulator exists to ensure cover is effective. Second, to allow insureds to pool together and benefit from diversification without requiring onerous bilateral contracts. They do this through insolvency rules, which provide the framework under which unrelated insureds interact in the unlikely event of an insolvency.

InsCo comes into existence at time \(t=0\) and lasts for one period. InsCo has no initial liabilities. At \(t=0\) it writes one or more single-period insurance contracts and collects premiums from its insureds.

When InsCo writes a policy, it collects premium at \(t=0\) and earns it over the period. All other transactions occur at the end of the period. Therefore all the premium is earned and available to pay claims at \(t=1\). If InsCo’s ending assets \(a\) are insufficient to pay the claims, then it defaults.

InsCo has promised to pay policyholders claims under various contingencies, with the aggregate promise represented by the random variable \(X\ge 0\). If \(X>a\), then only \(a\) gets paid out, i.e., the actual payments are the minimum of \(X\) and \(a\), which we write as \(X\wedge a\). We assume the probability distribution of \(X\) is known.

InsCo is owned by investors who provide risk bearing capital. Investors are also risk averse. At time \(t=0\), as well as collecting premiums, InsCo also raises capital from investors by selling them its uncertain \(t=1\) residual value. That is, at time \(t=1\), InsCo pays any claims due in the amount of \(X \wedge a\) and pays any residual value \((X-a)^+\), if it exists, to its investors as return of capital plus a dividend or investment return. If InsCo’s ending assets are insufficient to pay the claims, \(X>a\), then it defaults. Investors have limited liability: they may lose their original investment but owe nothing more.

Premiums cover expected losses and loss adjustment expenses, and the cost of capital including frictional capital costs. All other expenses are outside our model.

Symbolically, at time \(t=0\), InsCo collects premiums \(P\) from policyholders and capital \(Q\) from investors. These are the only sources of funds and comprise the total assets via the funding equation: \[ a = P+Q. \tag{4.1}\] Two important questions arise from InsCo’s promises to pay.

- Are there sufficient assets to honor those promises?

- Are investors being adequately compensated for taking on those risks?

Crucially, we need to talk about not one but two different risk measures to answer these questions.

Question 1 concerns risk tolerance and is answered by the Capital Adequacy module. It determines the assets necessary to back an existing or hypothetical portfolio at a given level of risk. This exercise can also be reverse-engineered: given existing or hypothetical assets, what constraints on business does the risk tolerance entail? Alternatively, given business and capital what is the implied risk tolerance?

Assets \(a\) and liabilities \(X\) are related by some rule driven by a combination of regulatory authorities, rating agencies, and InsCo’s own internal risk management policies, representing a risk tolerance. Such a rule we call a capital risk measure and we may write \(a\) as a functional \(a(X)\). Value at Risk (VaR) or Tail Value at Risk (TVaR) at some high confidence level, such as 99.5 percent or 1 in 200 years, are both popular, but other possible measures exist, see ?sec-Capital-Adequacy. As a first approximation, we may take it that \(a\) is sufficient to avoid insolvency altogether, i.e., in all events, all claims are paid.

Question 2, answered by the Pricing module, concerns how that asset amount \(a\) is to be split between premium \(P\) and capital \(Q\) (Equation 4.1); this is quite different from determining \(a\). It is about risk pricing or risk appetite. We must determine the expected margin insureds need to pay in total to make it worthwhile for investors to bear the portfolio’s risk. Such a rule we call a pricing risk measure and we may write premium as a functional \(P = \rho(X)\).

4.2 Bernoulli Risks and Their Pricing

posts/040-files/020-bernoulli.qmd

random variables · distributions

Bernoulli distributions are especially simple and this makes them a good starting place for pricing. This section starts by defining Bernoulli risks and revealing nuances between random variables and distributions. Then, it considers properties of Bernoulli pricing schedules. Throughout we work on a standard probability space \((\Omega, \mathcal F, \mathsf P)\) and identify \(\Omega=[0,1]\) as usual, Section 2.8. All random variables are real-valued functions defined on \(\Omega\).

Definition 4.1

- A Bernoulli random variable is one taking values only in \(\{0,1\}\). Specifically, a Bernoulli \(s\) r.v. takes the value \(1\) with probability \(s\).

- A Bernoulli risk is a class of Bernoulli random variables with the same distribution.

A Bernoulli \(s\) random variable can be represented as \(\{U\in A\}\) for any set \(A\) with \(\mathsf PA=s\), where \(U\) is a uniform random variable, Section 2.8. The notation uses our convention identifying a set with its indicator function \(1_{\{U < s\}}\). For example, we could take \(A=\{U < s\}\) or \(\{U>1-s\}\). Under the payoff convention, this is a risk that pays \(1\) with probability \(s\) and \(0\) otherwise. Under the loss convention \(\{U < s\}\) marks a unit loss with probability \(s\). Its complement, \(1 - \{U < s\} = \{U < s\}^c = \{U > 1-s\}\), describes a claim that pays \(1\) with probability \(1-s\).

Before thinking about pricing, we clarify why we work with Bernoulli risks rather than Bernoulli random variables. For insurance, it is the latter that matters: what counts is the distribution or law of the random variable. Pricing is invariant over all risks with the same law, explaining the law invariant terminology (CH2). This simplification rests on a critical assumption: individual risks are independent and there no underlying systemic factor drives outcomes. In financial contexts risks often depend on common underlying state variables, such as the market return, and law invariance is not appropriate. By contrast, non-financial insurance is, almost by definition, concerned with idiosyncratic risks that diversifies in large portfolios, making a law-invariant perspective is natural. Law invariance also aligns with the regulator or risk manager’s concern with probabilities of default and solvency rather than the evolution of market states. In this context a law invariant risk measure is sometimes called objective.

Since a Bernoulli \(s\) risk is completely determined by its parameter \(s\) it is reasonable to assume that its price as a security (equivalently, of insuring against the outcome \(1\)) is a function of \(s\). This supposition is bolstered by Borch (1962), who suggests that an additive pricing functional must be a function of the higher moments since all higher Bernoulli moments also equal \(s\).

Suppose now that we have a function giving the price \(g(s)\) of a Bernoulli \(s\) security. What properties should \(g\) possess to seem reasonable? Three seem incontrovertible:

- \(g(0)=0\) because a sure zero is worthless and \(g(1)=1\) because a sure payment of \(1\) is worth \(1\).

- The range of \(g\) is in \([0,1]\) because payoffs are non-negative and never exceed 1.

- \(g\) should be increasing, making it stochastically monotone: a more likely loss costs more to insure ?def-monotone.

Together, these three properties ensure that the graph of \(g\) lies within the unit square and rises monotonically from \((0,0)\) to \((1,1)\).

Remark 4.1 (Reminder: probability notation and terminology). Since \(\Omega=[0,1]\), a uniform random variable is naturally a function \(\Omega\to\Omega\). The random variable \(X=\{U\in A\}\) is the indicator function of the set \(\{\omega\in\Omega\mid U(\omega) \in A \}\). It takes values \[ X(\omega) = \begin{cases} 1 & U(\omega) \in A \\ 0 & U(\omega) \not\in A. \end{cases} \]

Remark 4.2 (Monotone vs. stochastically monotone). If \(X\le Y\) in all states then insuring \(Y\) should cost more than \(X\), the monotone condition. Since \(g\) is law invariant we can extend to stochastically monotone by replacing \(X\) (or \(Y\)) with another variable with the same distribution. For example, if \(X\) is Bernoulli \(s\) and \(Y\) Bernoulli \(t\) with \(s<t\), we can find \(A_s\subset A_t\) of probabilities \(s\) and \(t\) so \(X\) and \(Y\) have the same distributions as the indicators on \(A_s\) and \(A_t\) and \(A_t\) dominates \(A_s\) pointwise. Monotone prices for pointwise dominated risks is incontrovertible and thus it is natural \(g(t)\ge g(s)\) and that \(g\) is increasing.

Remark 4.3 (Relation to PIR terminology). In PIR the random variable representation is called explicit whereas the quantile form, specified by outcome, is called implicit. Converting to exceedance probability produces the dual implicit representation.

Remark 4.4 (Historical note). The idea for Bernoulli pricing schedules goes back to Choquet’s work on non-additive measures and the Choquet integral (1953), and it reappears across fields under many names: distortion risk measure and weighted VaR in insurance and finance; spectral risk measure in coherent risk theory; probability weighting and rank-dependent utility in decision theory; the Wang transform and related pricing maps in actuarial science. Despite the different labels, the template is the same: keep track only of the distribution (law-invariant), reshape probabilities through \(g\) to capture risk aversion or market frictions, and then value payoffs by integrating against that reshaped probability. With this lens, familiar constructions like bid/ask pairs, tail risk emphasis, and premium principles emerge as simple transforms of \(g\).

4.3 Distortion Functions

posts/040-files/030-distortions.qmd

point · point

This section defines a distortion function, examines their properties, gives several examples, and considers the economic interpretation of distortions and their transformations.

4.3.1 Definition of a Distortion Function

The definition of a distortion function reflects how a reasonable Bernoulli pricing function should behave, Section 4.2.

Definition 4.2 A function \(g:[0,1]\to[0,1]\) is called a distortion function if

- \(g(0)=0\) and \(g(1)=1\)

- \(g\) is increasing, \(s\le t\) implies \(g(s)\le g(t)\).

The value \(g(s)\) is interpreted as the ask price to write any Bernoulli security that pays \(1\) with probability \(s\), under the loss sign convention. In addition, if

- \(g\) is concave (resp. convex) Definition 2.24

we call \(g\) a concave (convex) distortion function.

In this section, we interpret the value \(g(s)\) as the ask price to write a Bernoulli \(s\) risk and extend \(g\) to a functional on random variables in Section 4.4.

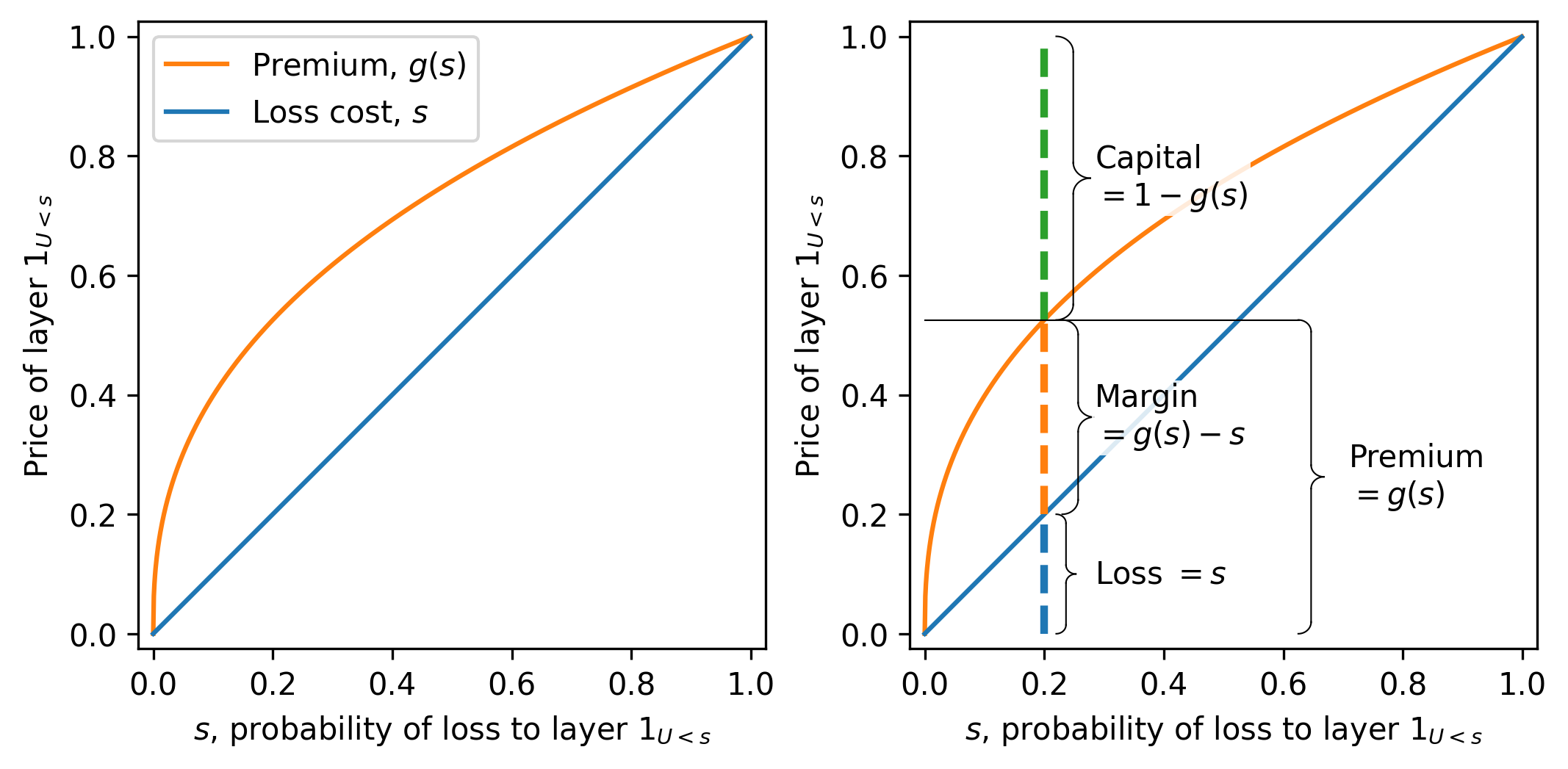

Figure 4.2 illustrates a typical concave distortion. The horizontal axis shows \(s\). Various insurance market statistics for the layer can be read off from \(g\). The expected loss equals the distance from the horizontal axis to the diagonal, the expected margin from the diagonal to the curve, and the capital from the curve to the top of the figure. The figure height equals 1, the outcome value of the Bernoulli layer in a loss state.

Condition (1) in the definition does two things. It codifies that certainty is free, and it ensures translation invariance. If we add a certain amount to a risk, its price should go up by exactly that amount. Without Condition (1) translation invariance fails. For example, suppose we used \(g(s)=0.1+0.8s\), so that \(g(0)=0.1\) and \(g(1)=0.9\). Then a sure zero is priced at \(0.1\) instead of \(0\), and a sure one is priced at \(0.9\) instead of \(1\). If we try to add one unit of certain payoff to the sure one, we expect the price to move from \(0.9\) to \(1.9\), but under this \(g\) there is no consistent way to represent or price the result. The failure at the endpoints breaks the link between adding certainties and adding their prices, which is why the requirements \(g(0)=0\) and \(g(1)=1\) are essential.

Condition (2) ensures more likely losses are more expensive.

Condition (3) implies pricing derived from \(g\) is subadditive, Definition 2.14. Further, conditions (2) and (3) imply the following important facts about concave distortions (see PIR 10.4 and 10.6 for details.).

- \(g\) is continuous everywhere except possibly at \(s=0\), where there can be a jump up to \(g(0+)\ge 0\).

- \(g\) is differentiable everywhere except for at most countably infinitely many points, where it can have kinks.

- \(g'(s)\ge 0\) where \(g'\) exists, since \(g\) is increasing.

- The left and right-hand derivatives of \(g\) exist everywhere on \((0,1)\), both are decreasing, and the right derivative is less than or equal to the left.

- \(g\) is twice differentiable almost everywhere, i.e., except for a possibly uncountable set of probability zero.

- Since \(g\) is concave, \(g''(s)\le 0\) where \(g''\) exists, in other words, \(g\) increases at a decreasing rate.

- If \(g\) is differentiable then it is concave iff \(g'\) is decreasing.

Finally, the interpretation of \(g(s)\) as the ask price to write any Bernoulli \(s\) means that \(g\) can be regarded as a law invariant functional on the set of Bernoulli random variables. Section 4.4 shows how to extend this latter interpretation to positive and general random variables.

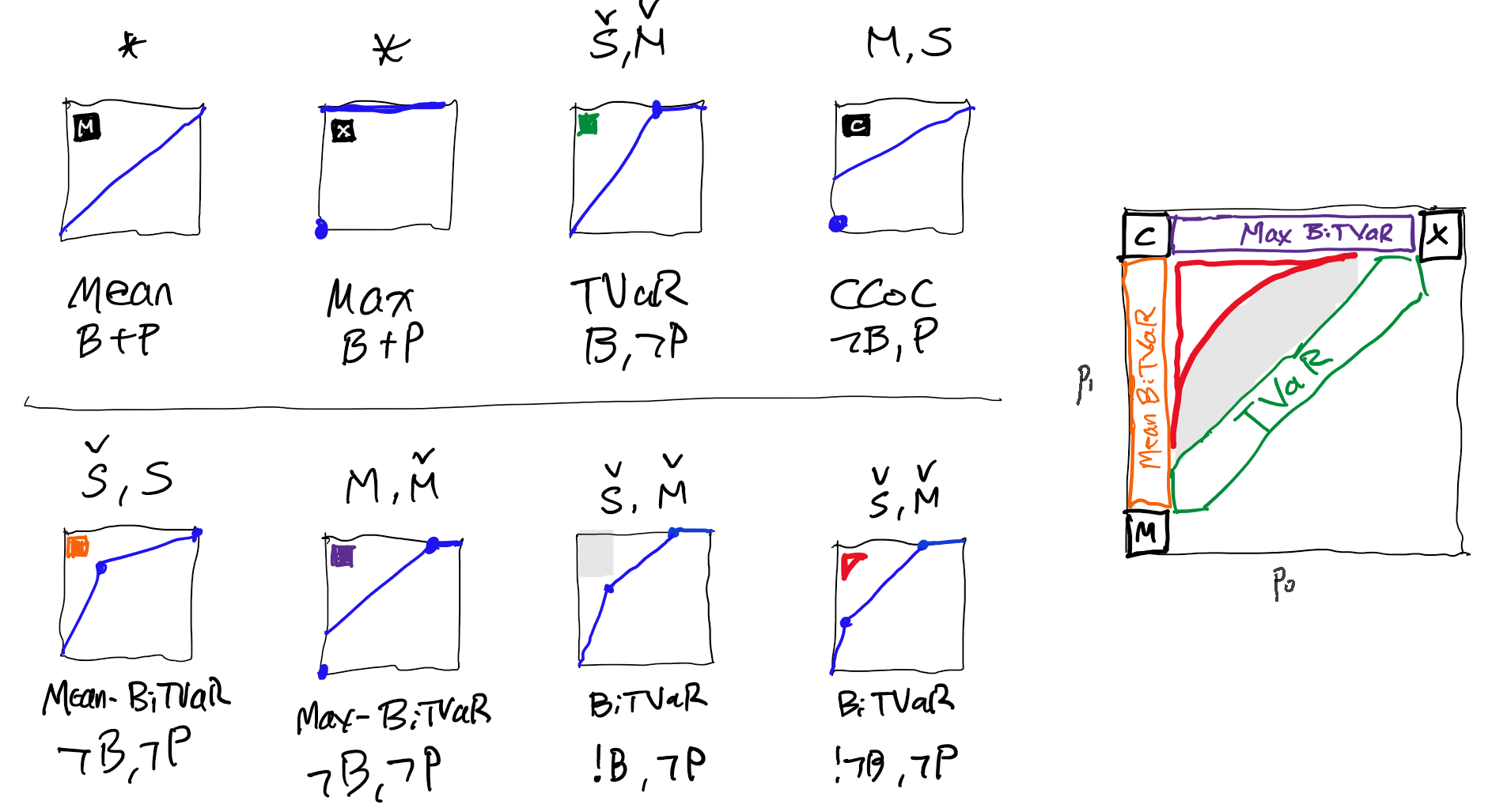

4.3.2 Five Representative Distortion Functions

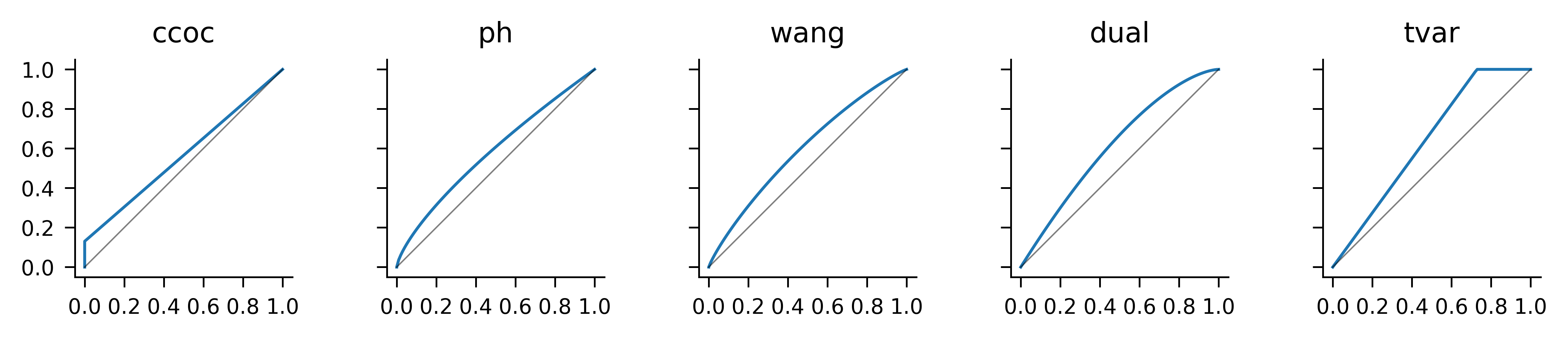

There are many parametric families of concave distortions in the literature, see PIR Ch 11.3 for a sampling. In practice, there are five families worth knowing well.

- Constant cost of capital (CCoC), \(g(0)=0\) and for \(s>0\), g(s) = s+$, where \(\nu+\delta=1\), \(\nu\ge 0\), and \(\delta \ge 0\). It is so named because it prices to a constant cost of capital equal to \(\delta/\rho\), Remark 4.5. It is more convenient to parameterize in terms of the discount rate \(\delta=r/(1+r)\) than the return \(r\), because discount ranges from \(0\) to \(1\) not \(0\) to \(\infty\).

- Proportional hazard (PH), \(g(s) = s^\alpha\), \(0 < \alpha \le 1\) so named because it act to increase the hazard rate (Dickson et al. 2015).

- Wang, \(g(s) = \Phi\left(\Phi^{-1}(s)+\lambda\right)\), \(\lambda \ge 0\), introduced in Wang (2000). \(\Phi\) is the standard Gaussian cumulative distribution function.

- Dual, \(g(s) = 1-(1-s)^m\), \(m\ge 1\).

- Tail Value at Risk (TVaR), \(g(s) = 1\wedge (s/(1-p))\) for \(0 \le p < 1\).

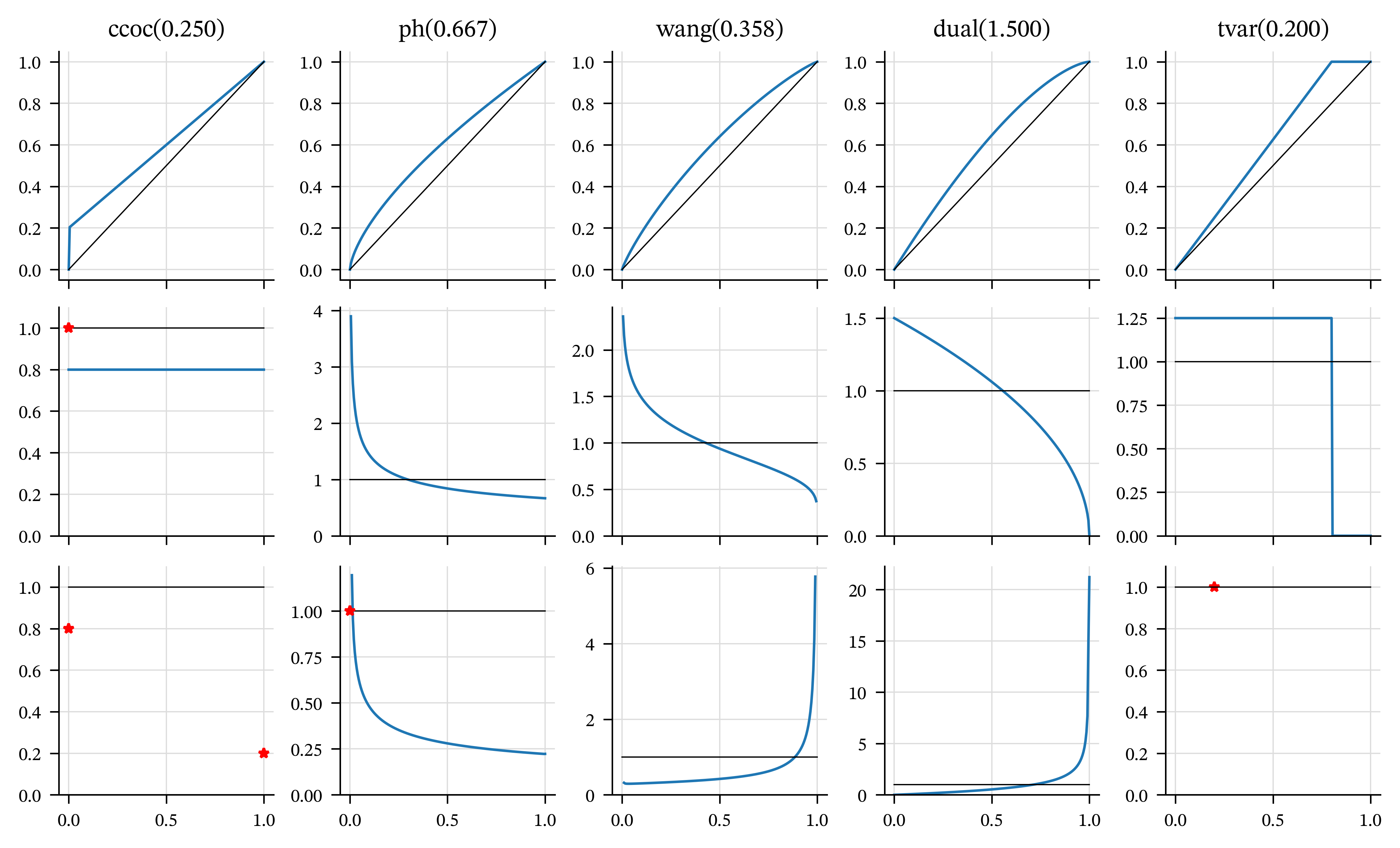

For fixed \(s\), the PH increases with decreasing \(\alpha\) and the other four increase with their parameter. Figure 4.3 plots examples of each, with broadly comparable parameters. The pictures are consistent with the various properties assumed and asserted above for distortions. Table 4.1 recaps the formulas for each \(g\) and shows the parameters used in the plots.

| Distortion | Formula | Parameter |

|---|---|---|

| CCoC | \(\nu s+\delta\) | \(\iota=0.1500\) |

| PH | \(s^\alpha\) | \(\alpha=0.7205\) |

| Wang | \(\Phi(\Phi^{-1}(s)+\lambda)\) | \(\lambda=0.3427\) |

| Dual | \(1-(1-s)^m\) | \(m=1.5951\) |

| TVaR | \(1\wedge s/(1-p)\) | \(p=0.2713\) |

Remark 4.5. The CCoC distortion prices a Bernoulli \(s\) risk to a constant cost of capital \(r:=\delta/\rho\) in the following sense. To credibly bear a Bernoulli risk requires assets \(a=1\). The insured pays \(g(s)\) leaving \(Q=1-g(s)=1-(\nu s +\delta)=\delta(1-s)\) funded by capital. The margin equals \(M=g(s)-s=\nu s +\delta - s =\delta(1-s)\). Therefore the return on capital is \(M/Q = \delta/\nu-r\). \(\quad\square\)

4.3.3 Concavity and Its Importance

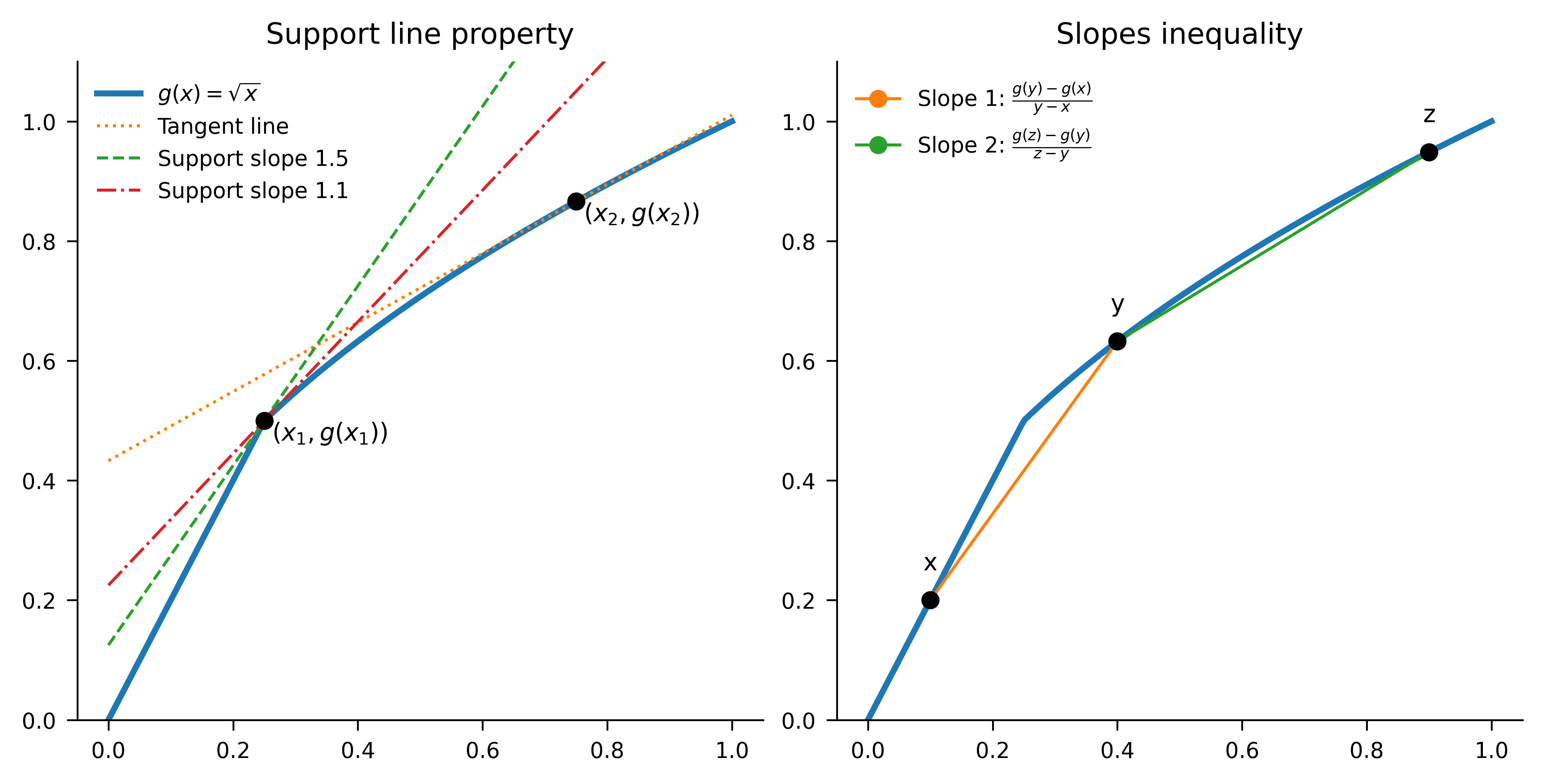

A function \(g\) is concave if for all \(x,y\in[0,1]\) and all \(0<\lambda <1\), \[ \lambda g(x)+(1-\lambda)g(y) \le g(\lambda x+(1-\lambda)y). \] Graphically, this condition means that every chord lies below the graph. Concavity is equivalent to the slopes inequality: for all \(0 \le x < y < z \le 1\), \[ \frac{g(y)-g(x)}{y-x} \ge \frac{g(z)-g(y)}{z-y}. \] That is, the secant slopes are non-increasing as you move right. The equivalence can be seen as follows.

- Concavity implies the slopes inequality: apply the definition to \(y\) as a convex combination of \(x\) and \(z\); rearrange to get the monotone-decreasing secant slopes.

- Slopes inequality implies concavity: fix \(x<y<z\) and write \(y=\lambda z+(1-\lambda)x\) with \(\lambda=(z-y)/(z-x)\). Compare the two secant slopes, substitute \(z-y=\lambda (z-x)\) and \(y-x=(1-\lambda)(z-x)\), cancel \(z-x>0\) to get \[ \frac{g(y)-g(x)}{1-\lambda} \ge \frac{g(z)-g(y)}{\lambda} \] and rearrange.

Concavity has a tangent line interpretation. If \(g\) is differentiable at \(x\), then for all \(y\in [0,1]\), \[ g(y) \le g(x)+g'(x)(y-x), \] i.e., the graph of \(g\) lies below its tangent line at every \(x\). If \(g\) is not differentiable, replace the tangent by any supporting line \(L\) at \(x\), that is, a line touching the graph of \(g\) from above. Then \(g\) is concave iff \(g\) lies at or below every support line at every point. Figure 4.4 illustrates these ideas a point where \(g\) is differentiable and one where it is not.

To see why the concavity of \(g\) is important, consider the function \(g(s)=s^2\) which is increasing, has \(g(0)=0\) and \(g(1)=1\), but is not concave (it is convex). Let’s look at pricing for the two random variables \(\{ U < 0.3\}\) and \(\{U > 0.7\}\), with \(U\) uniform. Both variables have price \(g(0.3)=0.09\). Because the two variables are defined with the same \(U\), a pool (sum) of the two has the same distribution as \(\{ U < 0.6\}\) and by law invariance has price \(g(0.6) = 0.36\). Thus, the price of the pool is greater than the sum of the prices of the parts, \(2\times 0.09 = 0.18\), contradicting diversification and violating subadditivity. This example shows subadditivity demands \[ g(s+t)\le g(s)+g(t)\qquad(s,t\ge 0,\ s+t\le 1), \] which follows from, but is weaker than, concavity.

Exercise 4.1 Confirm that pricing is subadditive for the PH \(\alpha=0.5\) distortion and the same two risks.

Solution 4.1. Each risk has price \(g(0.3) = 0.548\) and \[ g(0.6) = 0.775 < 2 \times 0.548 = 1.095. \] \(\square\)

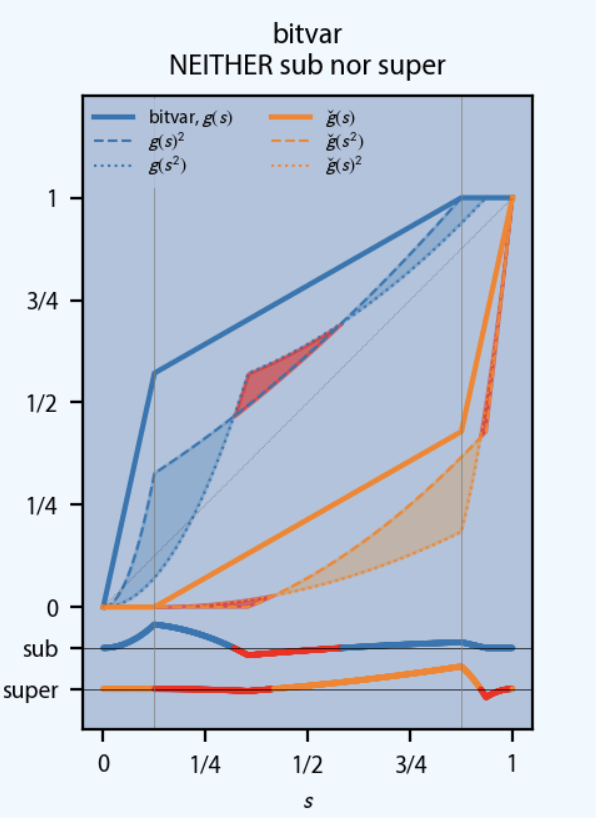

4.3.4 The Dual of a Distortion

By definition, \(g(s)\) is the ask price for a Bernoulli-\(s\) loss \(X\). We now derive the corresponding bid pricing function using a variation of the argument in Proposition 2.1. Define \(\check g(t)\) to be the bid price for a Bernoulli \(t\) loss.

Assume bid and ask prices come from the same internally consistent quoting rule. Suppose an insured buys the Bernoulli \(s\) loss \(X\) at the ask price \(g(s)\), and the insurer hedges (reinsures) by selling the complementary payoff \(1-X\). Since \(1-X\) is Bernoulli \(1-s\), the hedge earns \(\check g(1-s)\). Holding \(X\) and \(1-X\) produces the sure payoff 1, so its price is 1. No-arbitrage therefore implies \[ 1 = g(s) + \check g(1-s). \] Rearranging yields \[ \check g(s) = 1 - g(1-s). \] The bid price function \(\check g\) associated with \(g\) in this way is called the dual of \(g\) (not to be confused with the dual distortion).

Geometrically, the graph of \(\check g\) is obtained by a point reflection of the graph of \(g\) through \((1/2, 1/2)\); see (REF?). Therefore, \(\check g(0)=0\), \(\check g(1)=1\), and \(\check g\) is increasing. If \(g\) is concave, then \(\check g\) is convex. Taking the dual twice returns the original function: \(\check{\check g} = g\) since \(\check{\check g}(s)=1-\check g(1-s)=1-[1-g(1-(1-s))]=g(s)\).

4.3.5 Transformations of \(g\) and Their Economic Meaning

A distortion \(g:[0,1]\to[0,1]\) is increasing and satisfies \(g(0)=0\), \(g(1)=1\). There are four symmetries of the unit square that fix the diagonal from \((0,0)\) to \((1,1)\). They act on \(g\) as: \[ \begin{aligned} g(s) & \ && \text{(identity)}, \\ \check g(s) &:= 1 - g(1-s) && \text{(dual)}, \\ g^{-1}(t) &:= \inf \{s : g(s)\ge t\} && \text{(generalized inverse)}, \\ \hat g(s) &:= 1 - g^{-1}(1-s) = (\check g)^{-1}(s) && \text{(dual–generalized inverse)}. \end{aligned} \] Table 4.2 shows their action on the point \((s,g(s))\). The inverse and dual transformations swap concavity and convexity; the identity and dual-inverse both preserve concavity and convexity.

| Square symmetry | Transform | Point action | Induced | Concave/ex |

|---|---|---|---|---|

| identity | identity | \((s,g(s))\) | \(g\) | preserved |

| reflect in diagonal \(y=x\) | inverse | \((g(s),s)\) | \(g^{-1}\) | swapped |

| rotate \(180^\circ\) | dual | \((1-s,1-g(s))\) | \(\check g\) | swapped |

| reflect in anti-diagonal \(y=1-x\) | dual-inverse | \((1-g(s),1-s)\) | \(\hat g\) | preserved |

These four transformations form a commutative group isomorphic to the Klein four-group \(V\). Each element is an involution (has order 2). Useful identities include \[ \check{\check g}=g,\quad (g^{-1})^{-1}=g,\quad \hat{\hat g}=g,\quad \check{(g^{-1})}=\hat g. \]

SORT OUT.

The transformations have economic interpretations. We know \(g\) represents the ask price and \(\check g\) the bid price schedule for Bernoulli risks. The use of the remaining two is presented in REF.

Ask prices include a positive margin and therefore satisfy \(g(s)\ge s\). Bid prices include a negative margin and satisfy \(\check g(s)\le s\). Moreover, to ensure subadditivity (respectively superadditivity) of the induced pricing functional, \(g\) must be concave and \(\check g\) convex. The transformation given by rotation of the graph by \(180^\circ\), corresponding to \(g\leftrightarrow \check g\), preserves the boundary conditions while exchanging concavity and convexity, exactly as required. In particular, any increasing concave (respectively convex) function satisfying \(g(0)=0\) and \(g(1)=1\) necessarily lies above (respectively below) the diagonal and therefore embeds a positive (respectively negative) margin.

The dual-inverse \(\hat g\) admits a natural interpretation when pricing is expressed in the quantile domain. Writing a loss as \(X=q(p)\) for \(p\in[0,1]\), the distortion pricing functional can be written as an integral over distorted survival probabilities. Geometrically, this corresponds to evaluating the area under the curve \(x\mapsto g(S_X(x))\). Rotating this graph by \(180^\circ\) induces a new quantile function \(\hat q\) satisfying \(\hat q(u)=q(p)\) for the unique \(p\) such that \(1-u=g(1-p)\), that is, \(p=\hat g(u)\). Hence \[ \hat q(u)=q(\hat g(u)). \] The dual–inverse therefore acts by reparameterizing the quantile function rather than altering probabilities or outcomes: it combines the buyer–seller reversal (dual) with a change of probability scale (inverse). In this sense, \(\hat g\) represents the natural action of bid pricing directly in quantile space.

The dual-inverse transformation reveals a symmetry between the five representative distortions, see REF. It exchanges \[ \text{CCoC} \longleftrightarrow \text{TVaR}, \qquad \text{PH} \longleftrightarrow \text{Dual PH}, \] while the Wang transform is invariant.

Exercise 4.2 Confirm these exchanges.

Solution 4.2. The CCoC and TVaR symmetry is obvious from the picture. For the PH and dual, consider a point \((s, g(s))\) on the graph of PH \(g(s)=s^{1/d}\). Its reflected point is \[ (1-g(s), 1-s)= (1 - s^{1/d}, 1-s). \] Under the dual \(g(s)=1 - (1-s)^d\) this point maps to \[ \begin{aligned} 1 - s^{1/d} &\mapsto 1 - (1 - [1 - s^{1/d}])^d \\ &\mapsto 1 - (s^{1/d})^d \\ &\mapsto 1 - s \end{aligned} \] as required. To see the Wang is self-reflective, recall that \[ 1-\Phi(z)=1 - \mathsf P(Z \le z) = \mathsf P(Z> z) = \mathsf P(Z\le -z) = \Phi(-z) \] by the symmetries (and continuity) of the normal distribution. Then the point mirrored point \((1-g(s), 1-s)\) maps, under Wang, to \[ \begin{aligned} 1 - \Phi(\Phi^{-1}(s) + \lambda) &\mapsto \Phi[ \Phi^{-1}\{ 1 - \Phi(\Phi^{-1}(s) + \lambda)\} + \lambda ] \\ &\mapsto \Phi[ \Phi^{-1}\{ \Phi(-\Phi^{-1}(s) - \lambda) \} + \lambda ] \\ &\mapsto \Phi[ -\Phi^{-1}(s) - \lambda + \lambda ] \\ &\mapsto \Phi[ -\Phi^{-1}(s) ] \\ &\mapsto 1 - \Phi[ \Phi^{-1}(s) ] \\ &\mapsto 1 - s \end{aligned} \] as required. \(\square\)

4.3.6 TVaR as Extreme Points and the Kusuoka Correspondence

Before getting to details, here is a potted summary. New terms are defined as they are introduced below. All points in a convex set can be written as weighted sums of extreme points. The set of concave distortion functions and of measures on \([0,1]\) are both convex. TVaR distortions are extreme points (like corners) in former, and Dirac delta measures are extreme points in the latter. (The Dirac delta measure \(\delta_x\) put probability \(1\) on the single point \(x\).) The Kusuoka Correspondence \(\Psi\) is a map from the set of measures on \([0,1]\) to the set of concave distortion functions defined by \(\Psi(\delta_p)=\mathsf{TVaR}_p\) and then extending by linearity to all measures. Thus, \(\Psi\) is a dictionary between a distortion \(g\) and a probability measure on \([0,1]\) that gives a representation of \(g\) is a weighted sum of TVaRs. The rest of this subsection builds out the details of these ideas.

We start by recalling the standard definitions of convexity and extreme points in a vector space.

Definition 4.3 (Convex Sets and Extreme Points.) Let \(V\) be a vector space. A subset \(K \subseteq V\) is convex if the line segment connecting any two points in the set lies entirely within the set. That is, for all \(x, y \in K\) and \(\lambda \in [0,1]\): \[ \lambda x + (1-\lambda)y \in K. \]

An element \(e \in K\) is an extreme point if it cannot be decomposed as a non-trivial convex combination of other points in \(K\). Formally, \(e \in \mathsf{Ext}(K)\) if the equality \[ e = \lambda x + (1-\lambda)y \] with \(x, y \in K\) and \(\lambda \in (0,1)\) implies that \(x = y = e\).

Geometrically, extreme points correspond to the “corners” or “vertices” of the set. For example, in a triangle, the extreme points are the three vertices, and in a disk, the extreme points are its circular boundary. In the triangle, points on the edges are convex combinations of the endpoint vertices, and interior points are combinations of all three vertices. In the disk, points in the interior are combinations of boundary points.

Let \(\mathcal{M}\) be the set of Borel probability measures on \([0,1]\), and let \(\mathcal{D}_c\) be the set of concave distortion functions \(g: [0,1] \to [0,1]\) such that \(g(0)=0\), \(g(1)=1\), and \(g\) is concave. Lebesque measure on \([0,1]\) is denoted \(\mathsf P\).

Both \(\mathcal{M}\) and \(\mathcal{D}_c\) are convex spaces (weighted sums of a distortion is a distortion, weighted sum of probabilities is a probability) and their extreme points correspond.

By Aliprantis and Border (2006) Theorem 15.9, the extreme points of \(\mathcal{M}\) are precisely the Dirac measures: \[ \mathsf{Ext}(\mathcal{M}) = \set{ \delta_p : p \in [0,1] }. \]

The extreme points of \(\mathcal{D}_c\) are TVaR distortion kernel for \(p \in [0,1)\) as: \[ \mathsf{tvar}_p(t) = 1 \wedge \frac{t}{1-p} = \begin{cases} \dfrac{t}{1-p} & 0 \le t < 1-p \\ 1 & 1-p \le t \le 1. \end{cases} \] In the limiting case, \(\mathsf{tvar}_1(t)=\set{t>0}\). We can see this using a geometric proof as follows. Consider \(\mathsf{tvar}_p\) and \(t\) in two regions.

- For \(t \in [1-p, 1]\) (the flat region), \(\mathsf{tvar}_p(t)=1\). If \(\mathsf{tvar}_p = \lambda h_1 + (1-\lambda)h_2\) for concave distortions \(h_1, h_2\), then \(h_1(t)=h_2(t)=1\) on this interval, as 1 is the upper bound of any distortion.

- For \(t \in [0, 1-p]\) (the linear region), \(\mathsf{tvar}_p(t)\) is the chord connecting \((0,0)\) to \((1-p, 1)\). By concavity, any distortion \(h\) with \(h(1-p)=1\) must satisfy \(h(t) \ge \mathsf{tvar}_p(t)\) on this interval.

- Since for \(\mathsf{tvar}_p\) the weighted average equals the lower bound, we must have \(h_1(t) = h_2(t) = \mathsf{tvar}_p(t)\) everywhere.

Thus, \(\mathsf{tvar}_p\) cannot be decomposed.

Proposition 4.1 (The Kusuoka Correspondence) There exists a linear bijection \(\Psi: \mathcal{M} \to \mathcal{D}_c\) defined by: \[ g(t) = \Psi(\mu)(t) = \int_{[0,1]} \mathsf{tvar}_p(t) \, \mu(dp). \]

Linearity follows from linearity of the integral with respect to the measure.

Lemma 4.1 (Mapping of Extreme Points) Let \(\delta_q \in \mathcal{M}\) be the Dirac measure concentrated at \(q \in [0,1)\). Then \(\Psi(\delta_q) = \mathsf{tvar}_q\).

Proof. By the defining property of the Dirac measure, for any bounded measurable function \(f\), \(\int f(p) \, \delta_q(dp) = f(q)\). Thus, substituting \(\mu = \delta_q\) in the definition of \(\Psi\), gives \[ \Psi(\delta_q)(t) = \int \mathsf{tvar}_p(t)\delta_q(dp) = \mathsf{tvar}_q(t) \] the TVaR distortion kernel. \(\square\)

Since \(\Psi\) is linear, it preserves convex structure. Thus we can also deduce that \(\mathsf{tvar}\) are extreme from the fact \(\delta_p\) are extreme.

4.3.7 The Spectrum of a Distortion

Let \(g(t) = \int_{[0,1)} \mathsf{tvar}_p(t) \, \mu(dp)\) be a typical distortion. Differentiating with respect to \(t\) yields the spectral function (WHERE DEFINED?): \[ \begin{aligned} g'(t) &= \frac{d}{dt} \int_{[0,1)} 1\wedge \frac{t}{1-p} \, \mu(dp) \\ &= \int_{[0,1)} \frac{1}{1-p} \set{t < 1-p} \, \mu(dp) \\ &= \int_{[0, 1-t)} \frac{1}{1-p} \, \mu(dp). \end{aligned} \]

Remark 4.9. The integral is restricted to \([0,1)\) because the term corresponding to \(p=1\) is \(\mathsf{tvar}_1(t) = \set{t>0}\). On the open interval \((0,1)\), this function is constant (equal to 1), and thus its derivative is zero. Excluding \(p=1\) also avoids the singularity at \(\dfrac{1}{1-p}\).

To align this with standard spectral representations, we perform a change of variables. Let \(s = 1-p\) represent the significance level (or tail probability). This transformation maps the confidence level \(p \in [0, 1-t)\) to the tail region \(s \in (t, 1]\).

Let \(\nu\) be the image measure of \(\mu\) under the map \(T(p) = 1-p\). That is, for any Borel set \(A\), \(\nu(A) = \mu\set{ p : 1-p \in A }\). (If \(\mu\) has a density \(f\), then \(\nu\) has density \(h(s)=f(1-s)\); standard change of variables.) Substituting \(s\) for \(1-p\) in the integral gives \[ g'(t) = \int_{(t,1]} \frac{1}{s} \, \nu(ds). \] If \(\mu\) has an atom at \(p=1\), \(g\) has a jump at \(t=0\), and the derivative contains a Dirac delta component. This expression now matches the spectral weight construction in Föllmer and Schied (2016), Prop 4.69. The weight \(\phi(t) := g'(1-t)\) at quantile level \(t\) accumulates the weights \(1/s\) for all components active in the tail (where the significance level \(s > t\)). We call \(\nu\) the TVaR-weight measure. See Simon (2011) Theorem 1.29 for a related result.

The previous derivation constructs \(g\) from a known measure. However, in practice, we often start with a desired risk profile \(g\) and need to determine its constituent TVaR weights. This inverse problem highlights the second dynamic in our circle of equivalences: the TVaR-weight measure \(\nu\) is proportional to the curvature of the distortion.

Since \(g'(t)\) is an integral over \((t, 1]\), the Fundamental Theorem of Calculus (generalized to measures) implies that the measure \(\nu\) is related to the negative derivative of \(g'\) \[ dg'(t) = -\frac{1}{t} \, \nu(dt). \] Rearranging this relates the mixing measure directly to the second distributional derivative of \(g\): \[ \nu(dt) = -t \, dg'(t). \tag{4.2}\] Since \(g\) is concave, \(g'\) is decreasing, so \(dg'\) is a negative measure. Thus \(\nu\) is a positive measure.

Equation 4.2 offers a powerful heuristic: highly curved regions of the distortion function correspond to heavy weighting of the TVaR parameters in that region. A pure TVaR is the extreme case: all the “curvature” at one point!

A subtle but important feature of this relationship arises at the endpoint \(t=1\). The standard Expected Value principle corresponds to \(\mathsf{TVaR}_0\), or \(s=1\). Does a given distortion \(g\) place any weight on the simple average?

We can detect this by inspecting the terminal slope \(g'(1)\). From the spectral integral: \[ \lim_{t \to 1} g'(t) = \nu(\{1\}). \] Because \(g\) is concave and \(g(t) \ge t\), the slope \(g'(1)\) is always between 0 and 1.

- If \(g'(1) = 0\): The measure places no weight on the mean. The risk measure is entirely driven by tail events (e.g., \(\mathsf{TVaR}_{0.99}\)).

- If \(g'(1) > 0\): The measure includes a discrete atom at \(s=1\) (the mean) with weight exactly equal to this final slope.

Example 4.1 (The Wang \(\alpha=0.5\) Distortion.) Consider the Wang distortion \(g(t) = \sqrt{t}\). This function is concave, distorting probabilities to be larger than they are (\(g(t) > t\)). The terminal slope is \(g'(t) = \dfrac{1}{2\sqrt{t}}\), so \(g'(1) = 0.5\). This immediately tells us that 50% of the risk measure is simply the expected value (\(\mathsf{TVaR}_0\)). The curvature is \(g''(t) = -\dfrac{1}{4} t^{-3/2}\). Using the curvature formula, the continuous density is \(\nu(dt) = -t [-\dfrac{1}{4} t^{-3/2}] dt = \dfrac{1}{4\sqrt{t}} dt\). Integrating this density over \([0,1]\) yields \(\int_0^1 \dfrac{1}{4\sqrt{t}} dt = 0.5\). As a result the spectral measure \(\nu\) consists of a continuous density \(\dfrac{1}{4\sqrt{t}}\) summing to 0.5, plus a Dirac mass of 0.5 at \(s=1\). The distortion is an equal mix of the mean and a curvature component.

Example 4.2 (Spectral function and TVaR-weight measures for the five representative distortions.)

| Name | \(g(t)\) | \(g'(1-t)\) spectral function | \(\mu(dp)\) TVaR weight measure |

|---|---|---|---|

| CCoC | \((\delta + \nu t)\set{t>0}\) | \(\delta\set{t=1} + \nu\set{t<1}\) | \(\delta\set{p=1} + \nu\set{p=0}\) |

| PH | \(t^a\) | \(a(1-t)^{a-1}\) | \(a(1-a)(1-p)^{a-1} \, dt + a\set{p=0}\) |

| Wang | \(\Phi(\Phi^{-1}(t) + \lambda)\) | \(e^{\lambda \Phi^{-1}(t) - \lambda^2/2}\) | \(-(1-p)g''(1-p) \, dp\) |

| Dual | \(1 - (1-t)^b\) | \(b t^{b-1}\) | \(b(b-1)(1-p)t^{b-2} \, dp\) |

| TVaR | \(1\wedge \dfrac{t}{1-p}\) | \(\dfrac{1}{1-p} \set{t > p}\) | \(\delta_{p}\) |

Figure 4.5 uses consistent parameter \(p\), introduced in Example 4.7. The first row shows \(g\), the second the spectral function \(g'(1-t)\), and the third the TVaR-weight measure \(-tg''(t)\). In the first two rows small values of \(t\) correspond to large losses. In the third row, \(t=0\) corresponds to weighting the mean, and \(t=1\) to weighting the maximum. Red stars indicate probability masses on particular points. \(\quad\square\)

See Mildenhall and Major (2022) 10.9 for more examples computing \(\mu\) and BLOG-POST for more details.

4.4 Spectral Risk Measures

posts/040-files/040-srms.qmd

This [spectral risk measure] class is very wide and, in our opinion, is sufficient for any practical application of coherent risks. (Cherny and Orlov 2011)

point · point

In this section we show there is a one-to-one correspondence between spectral risk measures (Definition 2.22) and concave distortion functions. The correspondence is essentially forced by the axioms. The idea is as follows. If \(\rho\) is a SRM, then it is law invariant, comonotonic additive, and coherent, which in turn makes it monotone, translation invariant, positive homogeneous, and subadditive (see definitions in Section 2.9). Starting from \(\rho\) we can use each of these six properties to solve a different piece of the puzzle:

- Use law invariance to define a distortion by \(g(s)=\rho(A)\) for any set \(A\) of measure \(s\).

- Use comonotonic additivity, positive homogeneity and monotone to extend \(g\) to positive random variables using the layer-cake representation.

- Use translation invariance to extend to all random variables by writing \(X=(k+X) - k\) for \(k\) large enough that \(k+X\) is positive.

- Use subadditivity of \(\rho\) to show that \(g\) is a concave distortion. This step requires \(\Omega\) be atomless.

Conversely, starting with \(g\), we:

- Define a law invariant functional for positive \(X\) by \[ \rho(X)=\int_0^\infty g(\mathsf P(X>x))\,dx \tag{4.3}\] and extending to all \(X\) with the \((X+k)-k\) trick.

- Use standard properties of integrals to show that \(\rho\) is positive homogeneous and translation invariant.

- Use the fact that quantiles are linear in comonotonic variables \(q_{X+Y}=q_X+q_Y\), and that an increasing function commutes with taking quantiles \(f\circ q_X = q_{f\circ X}\) to show that \(\rho\) is comonotonic additive.

- Use law invariance and the pointwise monotonicity of integrals to show that \(\rho\) is monotone.

- Use the concavity of \(g\) to show \(\rho\) is subadditive.

The rest of this section fleshes out these ideas. We present the derivation in detail because it is informative to see how each assumptions is used to drive the conclusions, and because it extends PIR to all random variables, not just positive ones. We start by recalling the survival function expression for the mean and explaining the layer-cake representation.

Exercise 4.3 (Functional notation extends function notation.) This exercise shows that the function and proposed functional notation for \(g\) are consistent. Let \(X\) be a Bernoulli \(s\) random variable. Show that \[ g(s) = \int_0^\infty g(\mathsf P(X>x))\,dx. \]

Solution 4.3. By definition of a Bernoulli risk, \[ \mathsf P(X>x) =\begin{cases} 0 & x<=0 \\ s & 0 < x < 1 \\ 0 & x \ge 1 \end{cases} \] and therefore \[ g(\mathsf P(X>x)) =\begin{cases} 1 & x<=0 \\ g(s) & 0 < x < 1 \\ 0 & x \ge 1. \end{cases} \] The result follows. \(\quad\square\)

Exercise 4.4 (The CCoC distortion) Show that Equation 4.3 for a CCoC distortion \(g\) applied to bounded, positive \(X\) equals \[ g(X) = \nu \mathsf P(X) + \delta \max X. \]

Solution 4.4. Let \(m=\max X\) be the upper bound of \(X\). Then, using Equation 4.4 \[ \begin{aligned} \int_0^\infty g(\mathsf P(X>x))\,dx \int_0^\infty g(\mathsf P(X>x))\,dx &=\int_0^\infty (\nu S(x) + \delta)\set{X\le m}\,dx &=\mathsf P(X) + \delta m. \end{aligned} \] It is important that \(g(0)=0\) and that \(X\) is bounded in order for the integral to exist. \(\quad\square\)

4.4.1 The Survival Function Expression for the Mean

Actuaries are familiar with the survival function expression for the mean of a positive integrable random variable \[ \mathsf PX = \int_0^\infty S(x)\,dx \tag{4.4}\] To see why it holds for all integrable \(X\) use integration-by-parts, integrating \(dF\) to \(F\) for \(X<0\) and to \(-S\) for \(X\ge 0\): \[ \begin{aligned} \mathsf PX &= \int_{-\infty}^{\infty} x\,dF_X(x) \\ &= \int_{-\infty}^0 x\,dF_X(x) + \int_0^{\infty} x\,dF_X(x) \\ &= \left(xF(x)\Big\vert_{-\infty}^0 -\int_{-\infty}^0 F_X(x)dx\right) + \left( - xS(x)\Big\vert_0^{\infty} + \int_0^{\infty} S_X(x)\,dx\right) \\ &= -\int_{-\infty}^0 F_X(x)\,dx + \int_0^{\infty} S_X(x)\,dx. \end{aligned} \] The last line relies on \(F(-\infty)=S(\infty)=0\).

4.4.2 The Layer-Cake Representation for Positive Random Variables

The layer-cake representation of \(X\ge 0\) writes it as the limit of a sum of comonotonic indicator functions. It is an idea introduced to actuaries by Gary Venter.

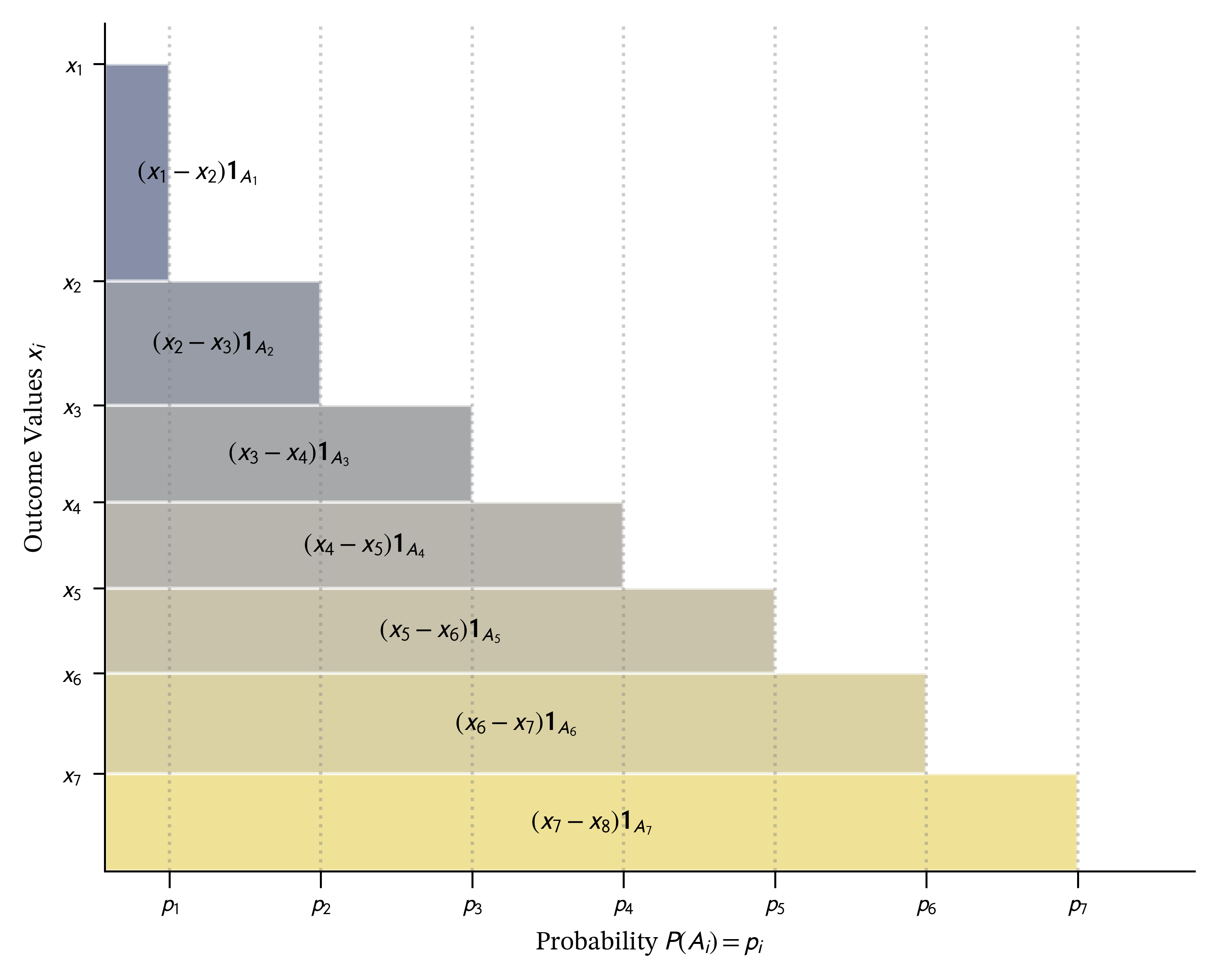

Start with a discrete random variable \(X\) taking distinct positive values \(x_1 > x_2 > \dots > x_n > 0\). We can explicitly create a sequence of nested sets \(A_k := \set{\omega \mid X(\omega) \ge x_k}\), which ensures \(A_1 \subset A_2 \subset \dots \subset A_n\). The risk \(X\) can then be reconstructed using its “layer-cake” decomposition as a sum of scaled indicator functions on these sets: \(X = \sum_{i=1}^n (x_i - x_{i+1}) A_i\), where we define \(x_{n+1}=0\), as illustrated in Figure 4.6. Because the sets \(A_i\) are nested, their indicator functions are comonotonic. (Remember, a set is identified with its indicator function.) The diagram looks like a special case, where \(X\) is neatly arranged to be decreasing. However, by Ryff’s theorem, we can just re-arrange the sample space to ensure that happens Föllmer and Schied (2016).

We can approximate a general \(X \ge 0\) using a sequence of simple functions: \[ X_n = \sum_{k=1}^{N_n} \alpha_{n,k} \mathbf{1}_{\{X > x_{n,k}\}} \tag{4.5}\] where \(X_n \uparrow X\) almost surely. By the monotone convergence theorem we can therefore write \[ X = \int_0^\infty \set{X > x}\,dx \] where the integrands are indicator functions \[ \set{X > x}(\omega)=\begin{cases} 1 & X(\omega) > x \\ 0 & \text{otherwise}. \end{cases} \]

4.4.3 Capacities and the Choquet Integral

The relationship between SRMs and distortion functions relies on two new ideas, a capacity and the Choquet integral.

Definition 4.4 A capacity \(c\) is a normalized, monotone set function \[ c(\emptyset)=0,\quad c(\Omega)=1,\quad A\subseteq B\Rightarrow c(A)\le c(B). \]

Given a distortion function \(g\), \[ A\mapsto g(\mathsf P(A)) \] defines a capacity, which we write \(c=g\,\mathsf P\). Not all capacities are of this form, but these are the only ones we consider. Unlike probabilities, capacities are may not be additive.

Definition 4.5 The Choquet integral of a random variable \(X\) with respect to a capacity \(c\) is \[ \int X\,dc = -\int_{-\infty}^0 [1 - c(\mathsf P(X > x))]\,dx + -\int_{-\infty}^0 c(\mathsf P(X > x))\,dx. \]

The Choquet integral lets the weight of an event depend on an ordering of the sample space by values of \(X\). When \(c\) comes from a concave \(g\)

- Bad states, the right tail \(X\ge 0\), count more than their raw probability because \(g(s)\ge s\).

- Good states, the left tail \(X<0\), count less because \(\check g(s)\le s\) (since \(\check g\) is convex).

The next proposition confirms that Pollard’s notation works as expected for the TVaR distortion kernels. Define the usual TVaR (or expected shortfall) functional as \[ \mathsf{TVaR}_p(X) := \frac{1}{1-p}\int_p^1 q(s)\,ds \tag{4.6}\] where \(q(p) := \inf \{x : S_X(x) \le 1-p\}\) is the lower \(p\) quantile function of \(X\).

Proposition 4.2 (TVaR Functional.) The functional induced by the extreme TVaR distortion kernel \(\mathsf{tvar}_p(s):=1\wedge s/(1-p)\) is the TVaR functional \(\mathsf{TVaR}_p\).

Proof. We need to show the functional defined by \(\mathsf{tvar}_p(X)\) equals TVaR defined by Equation 4.6. This follows using the definition, notational trick, and Fubini’s theorem: \[ \begin{aligned} \mathsf{tvar}_p(X) &= \int_0^\infty \mathsf{tvar}_p(S_X(x))\, dx \\ &= \int_0^\infty 1 \wedge \frac{S_X(x)}{1-p}\, dx \\ &= \int_0^\infty \frac{1}{1-p} \int_p^1 \set{F(x)<t}\,dt\,dx \\ &= \int_0^\infty \frac{1}{1-p} \int_0^1 \set{t \ge p} \set{F(x)<t}\,dt\,dx \\ &= \int_0^1 \frac{\set{t \ge p} }{1-p} \int_0^\infty \set{F(x)<t}\,dx\,dt \\ &= \int_p^1 \frac{1}{1-p} \int_0^\infty \set{F(x)<t}\,dx\,dt \\ &= \frac{1}{1-p} \int_p^1 q(t)\,dt. \end{aligned} \] In detail, as a function of \(x\) and \(p\), we have: \[ \begin{aligned} \frac{1}{1-p} \int_p^1 \set{F(x)<t}\,dt &= \begin{cases} \dfrac{1 - F(x)}{1-p} & p \le F(x),\ x\ge q(p) \\ 1 & p > F(x),\ x < q(p). \end{cases} \end{aligned} \]

4.4.4 Six Representations of the Choquet Integrals

In this section we give six different integral representations of the Choquet integral.

Theorem 4.1 Let \(g \in \mathcal{D}_c\) be continuous distortion with associated measure \(\mu\) and spectral derivative \(g'\), and define the capacity \(c(A):=g(\mathsf P(A))\) and \(G_X(x):=1-g(S_X(x))\). Then, the following representations are equivalent: \[ \begin{aligned} g(X) &\stackrel{(a)}{=} \int_{[0,1)} \mathsf{TVaR}_p(X) \, \mu(dp) \\ &\stackrel{(b)}{=} \int_0^1 q_X(t) g'(1-t) \, dt \\ &\stackrel{(c)}{=} \int_0^1 q_X(\hat{g}(s)) \, ds \\ &\stackrel{(d)}{=} \int_0^1 G_X^{-1}(t) \, dt \\ &\stackrel{(e)}{=} \int_0^\infty g(S_X(x)) \, dx \\ &\stackrel{(f)}{=} \int X \, dc \end{aligned} \]

Proof. Throughout, \(X\ge 0\) is integrable, \(S_X(x)=\mathsf P(X>x)\), and \(q_X\) is the (left-continuous) quantile function. Because \(g\) is concave and increasing, it is absolutely continuous on compact subintervals of \((0,1)\), has a right-derivative \(g'_+\) a.e., and the Lebesgue–Stieltjes measure \(dg\) decomposes as \(dg = g'_+(u)\,du + dg_s\). To keep notation light, we write \(g'(u)\) for \(g'_+(u)\) and, when \(g\) has a singular part, interpret identities involving \(g'(u)\,du\) as the corresponding Stieltjes identities (replace \(g'(1-t)\,dt\) by \(d(g(1-t))\)). Under absolute continuity, the displayed formulas hold literally.

(b) \(\iff\) (e): Choquet/survival to spectral. For \(x\ge 0\), \[ g(S_X(x))=\int_0^{S_X(x)} g'(u)\,du =\int_0^1 \set{u<S_X(x)}\,g'(u)\,du. \] Insert into (e) and apply Tonelli/Fubini: \[ \int_0^\infty g(S_X(x))\,dx =\int_0^1 g'(u)\int_0^\infty \set{u<S_X(x)}\,dx\,du. \] Now \(u<S_X(x)\) is equivalent to \(P(X>x)>u\), i.e. \(F_X(x)<1-u\). Since \(X\ge 0\), \[ \int_0^\infty \set{F_X(x)<s}\,dx = q_X(s),\qquad s\in(0,1), \] because \(\{x\ge 0:F_X(x)<s\}=[0,q_X(s))\). With \(s=1-u\) this gives \[ \int_0^\infty \set{u<S_X(x)}\,dx = q_X(1-u), \] hence \[ \int_0^\infty g(S_X(x))\,dx =\int_0^1 g'(u)\,q_X(1-u)\,du =\int_0^1 q_X(t)\,g'(1-t)\,dt, \] after the substitution \(t=1-u\). This is (b).

(a) \(\iff\) (b): mixture to spectral. From the distortion-mixture representation \[ g(t)=\int_{[0,1)} \mathsf{tvar}_p(t)\,\mu(dp), \qquad \mathsf{tvar}_p(t)= 1\wedge \frac{t}{1-p}, \] differentiate under the integral (valid a.e.) to obtain, for a.e. \(u\in(0,1)\), \[ g'(u)=\int_{[0,u)} \frac{1}{1-p}\,\mu(dp). \] Substitute into (b) and apply Fubini: \[ \begin{aligned} \int_0^1 q_X(t)\,g'(1-t)\,dt &=\int_0^1 q_X(t)\int_{[0,1-t)}\frac{1}{1-p}\,\mu(dp)\,dt \\ &=\int_{[0,1)}\frac{1}{1-p}\int_0^{1-p} q_X(t)\,dt\mu(dp). \end{aligned} \] Finally, rewrite the inner integral as \(\int_p^1 q_X(s)\,ds\) (substitute \(s=t+p\)), giving \[ \begin{aligned} \int_0^1 q_X(t)\,g'(1-t)\,dt &=\int_{[0,1)} \frac{1}{1-p}\int_p^1 q_X(s)\,ds\mu(dp) \\ &=\int_{[0,1)} \mathsf{TVaR}_p(X)\,\mu(dp), \end{aligned} \] which is (a).

(b) \(\iff\) (c): change of variables via the dual–inverse. Let \(\check g(t)=1-g(1-t)\) and let \(\hat g\) be its upper inverse: \[ \hat g(s)=\sup\set{t\in[0,1]:\check g(t)\le s}. \] Then \(\check g(\hat g(s))=s\) for a.e. \(s\), and where \(\check g\) is differentiable we have \[ \frac{d}{dt}\check g(t)=g'(1-t). \] Using the substitution \(s=\check g(t)\) (equivalently \(t=\hat g(s)\)) yields \[ \int_0^1 q_X(\hat g(s))\,ds =\int_0^1 q_X(t)\,d\check g(t) =\int_0^1 q_X(t)\,g'(1-t)\,dt, \] with the middle expression understood as a Stieltjes integral when \(\check g\) is not absolutely continuous. This is (b). Compare Föllmer and Schied (2016) Corollary 4.87.

(d) \(\iff\) (e): expectation under the distorted distribution. Let \(Y\) have distribution function \(G_X\). Then \[ \int_0^1 G_X^{-1}(t)\,dt = \mathsf{P}Y. \] By the tail-sum formula for \(Y\ge 0\), \[ \begin{aligned} \mathsf{P}Y &=\int_0^\infty P(Y>x)\,dx \\ &=\int_0^\infty (1-G_X(x))\,dx \\ &=\int_0^\infty g(S_X(x))\,dx, \end{aligned} \] which is (e). Compare Föllmer and Schied (2016) Proposition 4.86.

(f) \(\iff\) (e): Choquet notation. For the capacity \(c(A)=g(P(A))\), the Choquet integral of \(X\ge 0\) is defined by \[ \begin{aligned} \int X\,dc := \int_0^\infty c(\{X>x\})\,dx \\ &=\int_0^\infty g(P(X>x))\,dx \\ &=\int_0^\infty g(S_X(x))\,dx. \end{aligned} \] This is exactly (e), written as (f). \(\quad\square\)

Remark 4.7. Since \(g\) is concave it is differentiable almost everywhere, the points where it isn’t can be ignored in formula (b).

4.4.5 The Spectral Representation Theorem I

In this section we present the first of two representation theorems for spectral measures. This version draws the connection between a SRM and a distortion function. The second is presented in Section 4.4.7.

Theorem 4.2 (Spectral Representation Theorem.) Let \(\rho\) be defined on the space of bounded random variables on an atomless probability space \(\Omega\).

- \(\rho\) is a loss spectral risk measure if and only if there is a concave distortion function \(g\) such that \[ \rho(X) = -\int_{-\infty}^0 \check g(F_X(x))\,dx + \int_0^\infty g(S_X(x))\,dx \tag{4.7}\]

- \(V\) is a payoff spectral valuation function if and only if there is a unique convex distortion function \(\check g\) such that \[ V(Y) = -\int_{-\infty}^0 g(F_Y(x))\,dx + \int_0^\infty \check g(S_Y(y))\,dy \] where \(g\) is the dual of \(\check g\).

In both cases, the distortion is unique.

Before addressing the proof, it is helpful to interpret equation Equation 4.7 for a random variable \(X\) that can take both positive and negative values.

We work throughout under the loss sign convention and from the insurer’s perspective. Positive values of \(X\) represent payments the insurer must make; negative values represent receipts. To separate these effects, we use the Jordan decomposition, which writes any bounded random variable \(X\) as the difference of two non-negative parts: \[ X^+ := \max(X,0), \qquad X^- := \max(-X,0)=-\min(X, 0) \ge 0, \] so that \[ X = X^+ - X^-. \] We regard \(X^+\) as a pure loss and \(X^-\) as a pure gain. Many insurance contracts are pure losses, while many financing instruments are pure gains, though contracts such as futures or forwards may involve both components depending on how consideration is treated.

Both \(X^+\) and \(-X^-\) are non-decreasing functions of \(X\) and are therefore comonotonic. Since a spectral pricing functional is comonotonic additive, we can price the bundled position by summing the prices of its parts: \[ g(X) = g(X^+) + g(-X^-). \] This expression represents the net ask price of the contract from the insurer’s perspective.

Since \(X^+ \ge 0\), its price is the standard Choquet integral, \[ g(X^+) = \int_0^\infty g\bigl(S_X(x)\bigr)\,dx, \] which is the second term in Equation 4.7. It corresponds to the loaded cost of the potential payments the insurer must make and carries a positive margin: \(g(X^+) \ge \mathsf P(X^+)\).

The term \(-X^-\) represents receipts. By bid-ask duality, its ask price satisfies \[ g(-X^-) = -\check g(X^-), \] where \(\check g\) is the bid functional dual to \(g\). Since \(X^- \ge 0\), using Exercise 4.5 shows \[ \check g(X^-) = \int_0^\infty \check g\bigl(S_{X^-}(x)\bigr)\,dx = \int_{-\infty}^0 \check g\bigl(F_X(x)\bigr)\,dx. \tag{4.8}\] Thus the price of the gain component becomes \[ g(-X^-) = -\int_{-\infty}^0 \check g\bigl(F_X(x)\bigr)\,dx, \] which is the first term in Equation 4.7. It reflects the discounted credit the insurer gives for potential receipts; equivalently, it embeds a negative margin, since \(\check g(X^-) \le \mathsf P(X^-)\).

Putting the pieces together, \[ g(X) = \mathrm{ask}(X^+) - \mathrm{bid}(X^-) = \int_0^\infty g(S_X)\,dx - \int_{-\infty}^0 \check g(F_X)\,dx, \] exactly as in the spectral representation formula.

Economically, this decomposition makes clear how a loss spectral risk measure prices mixed-sign positions. The insurer surcharges what it may have to pay and discounts what it may receive. In practice, most insurance operations involve pure losses sold at a positive margin, while financing activities involve pure gains offered at an attractive (negative-margin) price to investors. These functions are typically handled by separate parts of the firm—underwriting and corporate finance—but equation Equation 4.7 shows how they combine coherently within a single pricing functional.

The interpretation for a payoff variable \(Y\) under the bid functional \(\check g\) is analogous: bid for \(Y^+\) minus ask for \(Y^-\). In both cases, the same principle applies: surcharge what is paid and discount what is received.

Exercise 4.5 Verify Equation 4.8.

Solution 4.5. This is a matter of chasing the definitions. \(S_{X^-}(x) = \mathsf P(X^- > x)=\mathsf P(-\min(X,0) > x)=\mathsf P(\min(X,0) < -x)=F_{-X^-}(-x)\) and so \(\check g(S_{X^-}(x))=\check g(F_{-X^-}(x))\). The result follows by substitution \(x\leftarrow -x\) in the first integral in Equation 4.8. \(\quad\square\)

Proof. The proof is in several steps. We start by assuming the loss sign convention and work on claim (1).

Part A. From SRM to distortion

Let \(\rho\) be a loss SRM.

Step A.1. Identify the distortion. Define \(g(s)=\rho(A)\) where \(A\) is any set with probability measure \(P(A)=s\). This is well-defined due to the law invariance of \(\rho\).

Step A.2. Show \(g\) is a distortion. SRMs are normalized, so \(g(0)=0\) and \(g(1)=1\), and monotone, so \(g\) is increasing.

Step A.3. Show \(g\) is a concave distortion. To show \(g\) is concave it is enough to show that \(g(y)\ge (g(x)+g(z))/2\) for all \(0\le x\le z\le 1\), \(y=(x+z)/2\), (Föllmer and Schied 2016, A.1.1). In an atomless space there is enormous flexibility to carve \(\Omega\) into disjoint pieces with arbitrary prescribed probabilities, usually with the help of a uniform variable \(U\). In particular, set \(A=\set{0\le U\le y}\), \(B=\set{z-y \le U \le z}\), so that \(\mathsf P(A) = \mathsf P(B) = y\), \(\mathsf P(A\cap B) = x\) and \(\mathsf P(A\cup B) = z\) (draw a picture). The two indicator functions \(A\cup B\) and \(A\cap B\) are comonotonic, and the sums of indicator functions \(A\cup B + A\cap B = A+B\). Therefore, \[ \begin{aligned} g(x) + g(z) &= \rho(A\cup B) + \rho(A\cap B) \\ &= \rho(A\cup B + A\cap B) \\ &= \rho(A + B) \\ &\le \rho(A) + \rho(B) \\ &= 2g(y) \end{aligned} \] by comonotonic additivity and subadditivity. Thus \(g\) is concave.

Step A.4. Layer Cake Representation for \(X \ge 0\). Applying comonotonic additivity, positive homogeneity, and law invariance to the discrete layer-cake approximation Equation 4.5 gives \[ \begin{aligned} \rho(X) &= \rho\left( \lim_n\sum_i^{N_n} (x_{n,i} - x_{n,i+1})\set{X>x_{n,i}} \right) \\ &= \lim_n \sum_i \rho( (x_{n,i} - x_{n,i+1}) \set{X>x_{n,i}} ) \\ &= \lim_n \sum_i (x_{n,i} - x_{n,i+1}) \rho(\set{X>x_{n,i}}) \\ &= \lim_n \sum_i (x_{n,i} - x_{i+1}) g(s_{n,i}) \end{aligned} \] where \(s_{n,i}=\mathsf P(X>x_{n,i})=S_X(x_{n,i})\). We can swap the limit and function because \(\rho\) is Lipschitz continuous Lemma 2.1. In the limit, the last line becomes the Riemann sum expression for the integral \[ \rho(X) = \int_0^\infty g(\mathsf P(X>x)) \, dx \tag{4.9}\] showing \(\rho(X)\) is the Choquet integral of \(X\) with respect to the capacity \(g \mathsf P\).

Step A.5. Extension to general \(X\). For a general \(X\), write \(X = (k+X) - k\) where \(k=\min X\). Then, apply Step 4 and use translation invariance of \(\rho\) and substitution in the integral: \[ \begin{aligned} \rho(X) &= \rho(k+X - k) \\ &= \rho(k+X) - k \\ &= \int_0^\infty g(\mathsf P(k + X > x)) \, dx - k \\ &= \int_0^\infty g(\mathsf P(X > x - k)) \, dx - k \\ &= \int_{-k}^\infty g(\mathsf P(X > x)) \, dx - k \\ &= -\int_{-k}^0 [1 - g(\mathsf P(X > x))] \, dx + \int_0^\infty g(\mathsf P(X > x)) \, dx \\ &= -\int_{-k}^0 \check g(F_X(x)) \, dx + \int_0^\infty g(S_X(x)) \, dx. \end{aligned} \] We can extend the limit on the left hand integral to \(-\infty\) because the integrand is zero, giving \[ \rho(X) = -\int_{-\infty}^0\check g(F_X(x)) \, dx + \int_0^\infty g(S_X(x)) \, dx \] as required.

Step A.6. Uniqueness. If \(h\) is another distortion satisfying Equation 4.7 then by Exercise 4.3 is must agree with \(g\).

Part B. From distortion to SRM

Conversely, start with a concave distortion function \(g\) and define a functional \(\rho\) by Equation 4.7, the Choquet integral of \(X\) with respect to the law invariant capacity \(c(A):=g(\mathsf P(A))\).

Step B.1. The Choquet integral is monotone, translation invariant, and positive homogeneous. Monotone follows because if \(X\ge Y\) then \(\set{Y>x}\subset \set{X>x}\) and \(g\) is increasing. Translation invariance follows from the same integral substitution used in step A.5. If \(k \ge 0\), then by substitution \[ \int_0^\infty g(\mathsf P(kX>x))\, dx = k\int_0^\infty g(\mathsf P(X>x))\, dx \] and similarly for \(X<0\), showing positive homogeneity.

Step B.2. The Choquet integral is comonotonic additive. Let \(q_X\) be the quantile function of \(X\) and let \(f\) be an increasing function. Then \(q_{g(X)}=g(q_X)\) (Föllmer and Schied 2016 Appendix A). If \(X\) and \(Y\) are comonotonic, then \(q_{X+Y}=q_X + q_Y\). Comonotonic additivity now follows from Theorem 4.1.

Step B.3. If \(g\) is concave then \(c\) is submodular. Given sets \(A\) and \(B\), there are three disjoint sets \[ A\cap B,\quad A\setminus B,\quad B\setminus A. \] To prove \(c\) is submodular we must show \[ g(A\cup B)+g(A\cap B)\le g(A)+g(B) \] or, re-arranging, that \[ g(A\cup B)- g(B)\le g(A) - g(A\cap B). \tag{4.10}\] The two sides of this inequality are different views of the size of \(A\setminus B\). Since \(\mathsf P\) is additive, it sees the two as the same size: \(\mathsf P(A\setminus B) = \mathsf P(A) - \mathsf P(A\cap B) = \mathsf P(A\cup B) - \mathsf (B)\). Dividing both sides of Equation 4.10 by this common value reduces us to showing \[ \frac{g(A\cup B)+g(A\cap B)}{\mathsf P(A\cup B) - \mathsf (B)} \le \frac{g(A) - g(A\cap B)}{\mathsf P(A) - \mathsf P(A\cap B)}. \tag{4.11}\] But Equation 4.11 is exactly the slopes inequality, which holds if and only if \(g\) is concave.

Step B.4. If \(c\) is submodular then \(\rho\) is subadditive. Since \(\rho\) is Lipschitz continuous, it is enough to prove this for random variables taking finitely many values. Write \(X=\sum_i x_iA_i\) and \(Y=\sum_i y_iA_i\) and order the indices so that \(x_1+y_1\le \cdots \le x_n+y_n\). Then the \(\mathsf Q\) measure from Lemma 4.2 has \[ \int X+Y\,dc = \mathsf Q(X+Y) = \mathsf Q(X) +\mathsf Q(Y) \le \int X\,dc +\int Y\,dc. \] This is exactly the impact of diversification: the order of the parts may differ from the order of the sum!

The payoff form follows by applying the loss form to \(-V(-X)\). Let \(V\) be a payoff SVF. Then \(\rho(X)=-V(-X)\) is a loss SRM and so there is a \(g\) associated with \(\rho\), Equation 4.7. For a payoff variable \(Y\) be a payoff variable, we have \[ \begin{aligned} V(Y) &= -\rho(-Y) \\ &= -\left( -\int_{-\infty}^0 \check gF_{-Y} +\int_0^\infty gS_{-Y} \right) \\ &= \int_{-\infty}^0 \check g\mathsf P(-Y\le y)\,dy - \int_0^\infty g\mathsf P(-Y>y)\,dy \\ &= \int_{-\infty}^0 \check g\mathsf P(Y\ge -y)\,dy - \int_0^\infty g\mathsf P(Y<-y)\,dy \\ &= \int_{\infty}^0 \check g\mathsf P(Y\ge x)\,(-dx) - \int_0^{-\infty} g\mathsf P(Y<x)\,(-dx) \\ &= - \int_{-\infty}^0 gF_Y + \int_0^{\infty} \check gS_Y, \end{aligned} \] i.e., we swap the roles of \(g\) and \(\check g\) in Equation 4.7.

The proof relies on the following technical lemma, which is a simplification of Föllmer and Schied (2016) Lemma 4.98, tailored to our application where we know the capacity comes from a distortion.

Lemma 4.2 The \(A_1,\dots,A_n\) be a partition of \(\Omega\) into disjoint measurable sets and let \(c\) be a normalized monotone submodular set function associated with the concave distortion \(g\). Let \(\mathsf Q\) be the measure on the sigma algebra \(\mathcal G\) generated by \(A_i\) with \[ \mathsf Q(A_k) = c(B_k) - c(B_{k-1}),\qquad B_0:=\emptyset\text{ and } B_k=\bigcup_{j=1}^k A_j,\ k\ge 1. \] Then \(\int X\,dc\ge\mathsf Q(X)\) for all \(\mathcal G\) measurable \(X=\sum_i x_iA_i\), and equality holds if the values of \(X\) are arranged in decreasing order \(x_1\ge \cdots \ge x_n\).

Proof. First, we show equality for decreasing order. Assume the values of \(X\) are ordered such that \(x_1 \ge x_2 \ge \dots \ge x_n\). By definition, the Choquet integral is given by: \[ \int X \, dc = \sum_{i=1}^n (x_i - x_{i+1}) c(\{X > x_{i+1}\}) \] (with \(x_{n+1}=0\)). Since \(X\) is sorted, the level sets correspond to the cumulative unions \(B_i\): \[ \{X > x_{i+1}\} = A_1 \cup \dots \cup A_i =: B_i. \] Substituting this into the definition and using the telescoping sum yields: \[ \begin{aligned} \int X \, dc &= \sum_{i=1}^n x_i [c(B_i) - c(B_{i-1})] \\ &= \sum_{i=1}^n x_i \mathsf Q(A_i) \\ &= \mathsf Q(X). \end{aligned} \]

Second, we show the inequality for arbitrary orders. Let \(Z\) denote the Radon-Nikodym derivative \(\dfrac{d\mathsf Q}{d\mathsf P}\). On the partition \(A_k\), it is given by the discrete density: \[ Z_k = \frac{\mathsf Q(A_k)}{\mathsf P(A_k)} = \frac{g(\mathsf P(B_k)) - g(\mathsf P(B_{k-1}))}{\mathsf P(B_k) - \mathsf P(B_{k-1})}. \] Geometrically, \(Z_k\) represents the slope of the secant line of \(g\) over the interval \([\mathsf P(B_{k-1}), \mathsf P(B_k)]\). Since \(g\) is concave, it satisfies the slopes inequality, the secant slopes are decreasing: \[ Z_1 \ge Z_2 \ge \dots \ge Z_n. \] Define a random variable \(Z\) by \(Z(\omega)=Z_k\) if \(\omega\in A_k\). Then, \[ \mathsf Q(X) = \mathsf P\left[ X \frac{d\mathsf Q}{d\mathsf P} \right] = \sum_{i=1}^n x_i Z_i \mathsf P(A_i). \] By the Hardy-Littlewood-Pólya rearrangement inequality, this weighted sum is maximized when \(X\) and \(Z\) are comonotonic (similarly ordered). Since \(Z\) is decreasing, the sum is maximized when \(X\) is also decreasing (\(x_1 \ge \dots \ge x_n\)). Thus, the Choquet integral, which corresponds to the expectation under the measure aligned with the decreasing order of \(X\), is an upper bound: \[ \int X \, dc \ge \mathsf Q(X). \]

Notation. We write \(g(X)\) for functional associated with a distortion function \(g\). If \(g\) is concave it is a SRM and if convex a SVF.

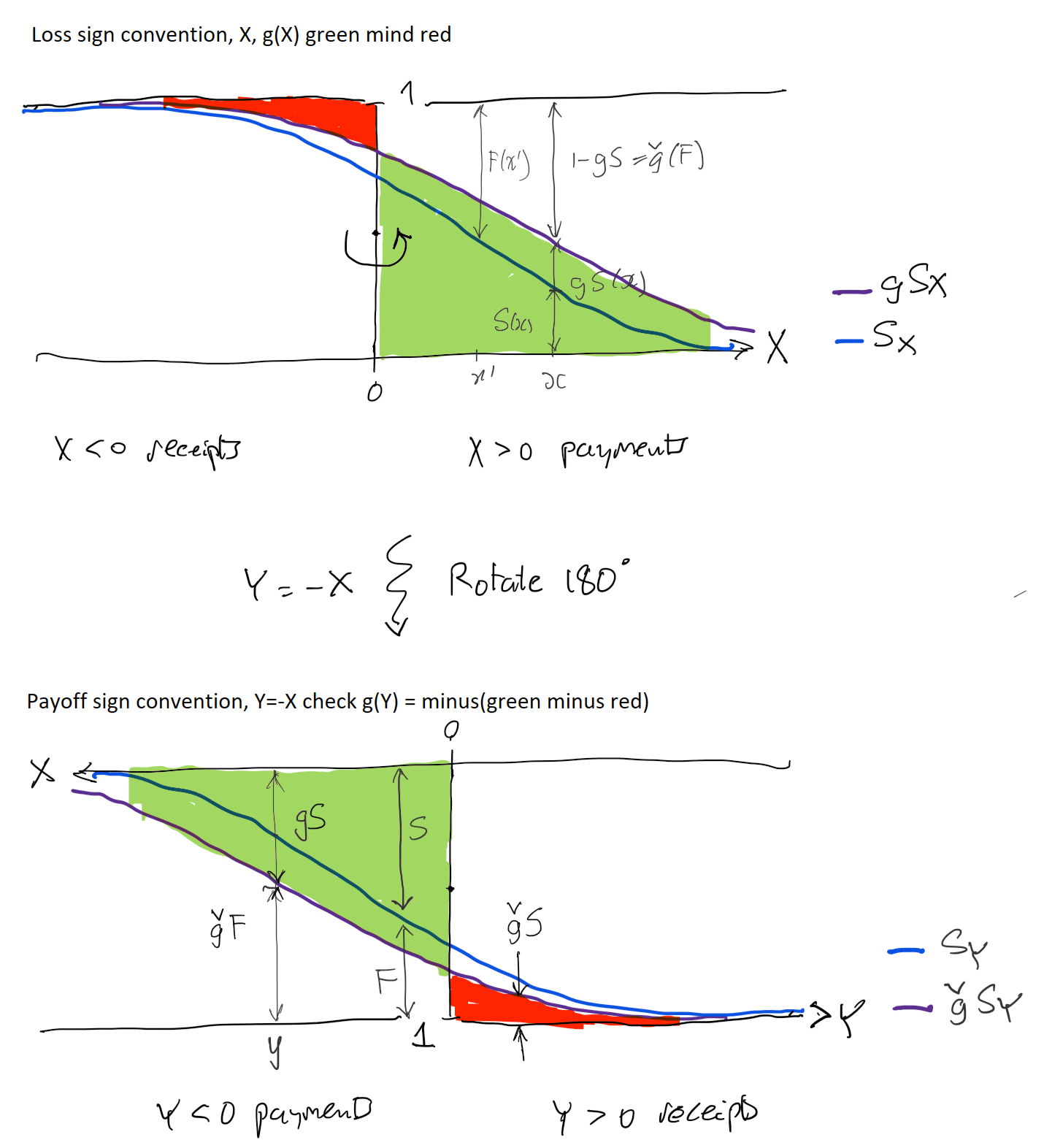

Figure 4.7 illustrates the two calculations, showing that one is transformed into the other by rotating by 180 degrees about the point \((0, 0.5)\), just as a similar rotation about \((0.5, 0.5)\) takes \(g\) to \(\check g\)! It also shows how (top panel) loss payments (positive) have their survival probabilities increased from \(s\) to \(g(s)>s\), “thickening the tail”, and receipts (negative) have their exceedance probabilities decreased, whereas on the right the opposite holds true. Remember \(\check g\) is convex and lies below the diagonal, so \(\check g(s)\le s\).

Remark 4.8 (Historical note). The representation of monotone functionals as integrals with respect to nonadditive set functions originates in Choquet’s capacity theory and the Choquet integral (Choquet 1954) Dellacherie’s monograph on capacities and stochastic processes systematizes the measure-theoretic foundations that later become standard in probability (Dellacherie 1972). Schmeidler then supplies the decisive axiomatic step: comonotonic additivity (plus mild regularity) is exactly what forces a Choquet-integral representation (Schmeidler 1986). In parallel, Yaari’s dual theory reframes the same mathematics as probability distortion rather than utility curvature (Yaari 1987), a viewpoint that enters actuarial pricing via Wang’s distortion premiums and related operators Wang (2000). In mathematical finance, coherence is axiomatized by Artzner, Delbaen, Eber, and Heath in the famous paper Artzner et al. (1999), and Acerbi (2002) identifies the law-invariant, coherent, comonotonic-additive subclass as spectral risk measures (weighted-quantile functionals with an increasing weight profile). Kusuoka’s representation theorem then describes the full law-invariant coherent class (on atomless spaces) as a supremum over a family of AVaR/TVaR-mixtures (equivalently, a supremum over a family of spectra): the comonotonic-additive case corresponds to a single spectrum (hence a unique distortion in your theorem), whereas genuinely non-comonotonic coherent functionals require a nontrivial supremum set (hence, in particular, the “max of at least two SRMs” phenomenon) (Kusuoka 2001). CHECK.

Remark 4.9. The careful reader will notice some possible sloppiness in in the definitions of \(F_Y\) and \(S_Y\) with regards to less than vs. less than or equal. This results from the definition of value at risk for payoff variables as the negative of the upper quantile, whereas for loss variables it is the lower quantile. CHECK. Marinacci and Montrucchio (2003) shows that the Choquet integral can be define using \(g(\mathsf P(X>x))\) or \(g(\mathsf P(X>x))\), because the integrands are equal there \(g\) is continuous and, as an increasing function, it can have only countably many jumps, which then do not affect the integral.

Example 4.3 This example shows that Theorem 4.2 is not true for a probability space with atoms. It demonstrates that on probability spaces with atoms of unequal probability, law invariance is too weak to enforce the concavity of the distortion function. It constructs a risk measure \(\rho\) that is law invariant, comonotonic additive, and coherent, yet that cannot be represented as a mixture of TVaRs. Kusuoka’s representation theorem fails because the atomic structure of the space prevents the construction of a consistent concave distortion.

Consider a simple probability space with two elementary events (atoms) that have unequal probabilities: \[ \Omega = \{\omega_1, \omega_2\}, \quad P(\{\omega_1\}) = 0.1, \quad P(\{\omega_2\}) = 0.9. \] Define the risk measure \(\rho\) as the expectation under a different probability measure \(Q\), specifically, the uniform measure on these two atoms: \[ \rho(X) = Q(X) = 0.5 X(\omega_1) + 0.5 X(\omega_2). \]

\(\rho\) is coherent, because it is linear, which implies subadditivity and positive homogeneity. It is comonotonic additive: linear operators are additive for all variables. And it is law invariant: on \(\Omega\) two random variables can only have the same distribution if they map the same values to the same atoms. Thus, \(X \sim Y \implies X = Y\) and law invariance holds automatically because no distinct \(X\) and \(Y\) share a distribution.

If Theorem 4.2 holds, there would be a concave distortion function \(g\) such that \(\rho(X) = \int X \, d(g P)\). Let’s derive the necessary shape of \(g\) using indicator functions (Bernoulli variables). If \(X = {\{\omega_1\}}\), a loss of 1 with probability 0.1, then \(\rho(X) = 0.5(1) + 0.5(0) = 0.5\) and so \(\rho(X) = g(P(\omega_1)) = g(0.1) = 0.5\). Similarly, if \(Y = {\{\omega_2\}}\) is a loss of 1 with probability 0.9 then \(\rho(Y) = 0.5(0) + 0.5(1) = 0.5 = g(0.9)\). We also know \(g(0)=0\) and \(g(1)=1\). Thus we have points on the distortion curve \[ (0,0) \to (0.1, 0.5) \to (0.9, 0.5) \to (1,1). \] But, the slope \(0 \to 0.1\) equals \((0.5-0)/0.1 = 5\), the slope \(0.1 \to 0.9\) equals \((0.5-0.5)/0.8 = 0\) and the slope \(0.9 \to 1\) equals \((1-0.5)/0.1 = 5\). This indicates a convex-concave (wobble) shape, showing \(g\) cannot be concave. \(\quad\square\)

Remark 4.10. Each of the six properties of \(\rho\) has a essential role in fixing its representation in terms of a distortion function, Table 4.4. Likewise each of the four properties of \(g\) is essential and they are combined with properties of integrals, Table 4.5.

| Property of \(\rho\) | Why Essential |

|---|---|

| Law invariant | Allows \(g(s)=\rho(A)\) |

| Comonotonic additive | Layer cake representation for sum of indicators |

| Positive homogeneity | Scaled layer cake |

| Translation invariant | Extend to negative \((X+k) - k\) |

| Monotone | Continuity for layer cake limit |

| Subadditivity | Implies \(g\) is convex via submodular capacity |

| Property of \(g\) | Why Essential |

|---|---|

| \(g(0)=0\), \(g(1)=1\) | \(c=g\mathsf P\) is normalized. |

| Increasing | \(c\) is monotone |

| Convex | \(c\) submodular and hence \(\rho\) is subadditive |

Example 4.4 (Subadditive and concave functions.) The subadditivity condition on distortion functions \(g(s+t) \le g(s) + g(t)\) is weaker than concavity. For example, the function: \[ g(x) = \begin{cases} 2x & \text{if } x \in [0, 1/4] \\ 1/2 & \text{if } x \in [1/4, 1/2] \\ x & \text{if } x \in [1/2, 1] \end{cases} \] is a subadditive pricing function (check cases based on the intervals for \(s\) and \(t\)). But it is not concave: its slope increases at \(s=1/4\). Thus the associated pricing functional should fail to be subadditive. Indeed, apply it to \(\{U < 0.5\}\) and \(\{ 0.25 < U < 0.75\}\). Each has price \(g(0.5) = 0.5\). But the sum has the same distribution as the sum of the comonotonic variables \(\{U > 0.25\}\) and \(\{U > 0.75\}\), which by comonotonic additivity has value \(0.75 + 0.5 = 1.25\). Thus, \(g\) is not subadditive as a functional.

Example 4.5 (Spreadsheet Pricing Discrete Choquet Integral.) This example gives a spreadsheet-like computation of \(g(X)\) for a discrete random variable taking finitely many positive values \(x_i\) with probabilities \(p_i\), applying the Choquet integral definition and using Lemma 4.2. The steps are:

- Sort by \(x_i\) in increasing order. Aggregate ties and sum probabilities.

- Compute the survival function at each atom \(i\): \(S_i=\sum_{j>i} p_j\) (set \(S_0=1,\ S_{n}=0\)).

- Compute the risk-adjusted “probabilities” (they sum to 1): \[ g p_i := g(S_{i-1})-g(S_i)\ \ \ (\ge 0). \]

- Mean and price are sum-products: \[ \mathsf P(X)=\sum_i x_i p_i,\qquad g(X)=\sum_i x_i\, g p_i. \]

Table 4.6 explains a spreadsheet-like implementation of these formulas. See Example 4.20 and CMM-REF for numerical examples applying this approach.

| Column | Formula |

|---|---|

| A | \(x_i\), sorted in ascending in rows 2 to \(n+1\) |

| B | \(p_i\), check \(p_i\ge 0\) and sum to 1 |

| C | \(F_i=\mathrm{SUM}(B2{:}B\set{n+1})\), cumulative probabilities |

| D | \(=1-Ci\), \(S_i=1-F_i\), exceedance probabilities |

| E | \(E1=0, Ei = D\set{i-1}\), shifts survival down and prepend 1, \(S_0=1, S_{i-1}\) |

| F | \(g p_i=g(E_i)-g(D_i)\), difference to obtain risk adjusted probs |

| G | contribution \(=A_i\times F_i\) |

| G total | \(g[X]=\mathrm{SUM}(G)\). |

Example 4.6 (Pricing Uniform Random Variables.) Let \(U\) be a standard uniform \(U\) on \([0,1]\) with \(S_U(p)=1-p\). This example computes \(g(U)\) across the five representative \(g\).

The TVaR price equals \((1 + p)/2\) by definition or integrating \(g(s)=s / (1-p)\wedge 1\) between \(0\) and \(1\) to get \((1-p)/2 + p=(1+p)/2\) if you prefer.

The CCoC price is \((1-\delta)\mathsf PU + \delta\max U = (1-\delta)/2 + \delta = (1 + \delta)/2\).

For the dual \(g(s)=1-(1-s)^b\) and \[ g(U) = \int_0^1 g(S(s))\,ds = \int_0^1 1-s^b\,ds = b/(b+1). \]

For the PH \(g(s)=s^a\) and the integral equals \(1/(1+a)\),

The Wang case, \(g(s)=\Phi(\Phi^{-1}(s)+\lambda)\) is a little more involved. Let \(Z\) and \(N\) be independent standard normal variables, then \[ \begin{aligned} g(U) &= \int_0^1 \Phi(\Phi^{-1}(s) + \lambda)\,ds \\ &= \int_{-\infty}^\infty \Phi(z+\lambda)\phi(z)\,dz \\ &= \mathsf E[\Phi(Z+\lambda)] \\ &= \int_{-\infty}^\infty \mathsf P(N \le z+\lambda)\phi(z)\,dz \\ &= \int_{-\infty}^\infty \mathsf P(N \le Z+\lambda \mid Z=z)\phi(z )\,dz \\ &= \mathsf P(N \le Z+\lambda) \\ &= \mathsf P(N - Z \le \lambda) \\ &= \Phi(\lambda / \sqrt 2) \end{aligned} \] because \(N-Z\) is normal with mean zero and variance \(2\).

Example 4.7 (Consistent Distortion Parameterizations.) The parameterizations given in Section 4.3.2 are awkward to work with and hard to compare because they have different ranges and are not all monotone with risk aversion. To address these shortcomings, we can use an more consistent parameterization defined by equalizing pricing for a reference random variable. We use the uniform as a reference because it is bounded, which allows full capitalization, and the relevant integrals are easy to compute, see Example 4.6.

For each of the five representative distortions Table 4.7 shows the standard parameter name from Section 4.3.2, and an expression for that parameter in terms of the common \(p\) determined by equating the price expression in the last column with that for the TVaR. For CCoC, \(\delta=p\). For the dual, equating \((1+p)/2\) with \(b/(1+b)\) gives \(b=(1+p)/(1-p)\). It can be helpful to think of the dual in terms of a parameter \(b:=1/a\) with range \([0,1]\) and mean \(1/(1+a)\). Similarly, for the PH \(a=(1-p)/(1+p)\), showing that \(a\) is the reciprocal of the dual parameter \(b\). Finally for the Wang \[ \dfrac{1+p}{2} = \Phi(\lambda / \sqrt 2) \implies \sqrt2\Phi^{-1}\left(\dfrac{1+p}2\right). \]

| Distortion | Parameter | Parameter in \(p\) | Price |

|---|---|---|---|

| TVaR | \(p\) | \(p\) | \(\dfrac{1+p}{2}\) |

| Dual | \(b\) | \(\dfrac{1+p}{1-p}\) | \(\dfrac{b}{1+b}\) |

| Wang | \(\lambda\) | \(\sqrt2\Phi^{-1}\left(\dfrac{1+p}2\right)\) | \(\Phi\left(\dfrac\lambda{\sqrt2}\right)\) |

| PH | \(a\) | \(\dfrac{1-p}{1+p}\) | \(\dfrac1{1+a}\) |

| CCoC | \(\delta\) | \(p\) | \(\dfrac{1+\delta}2\) |

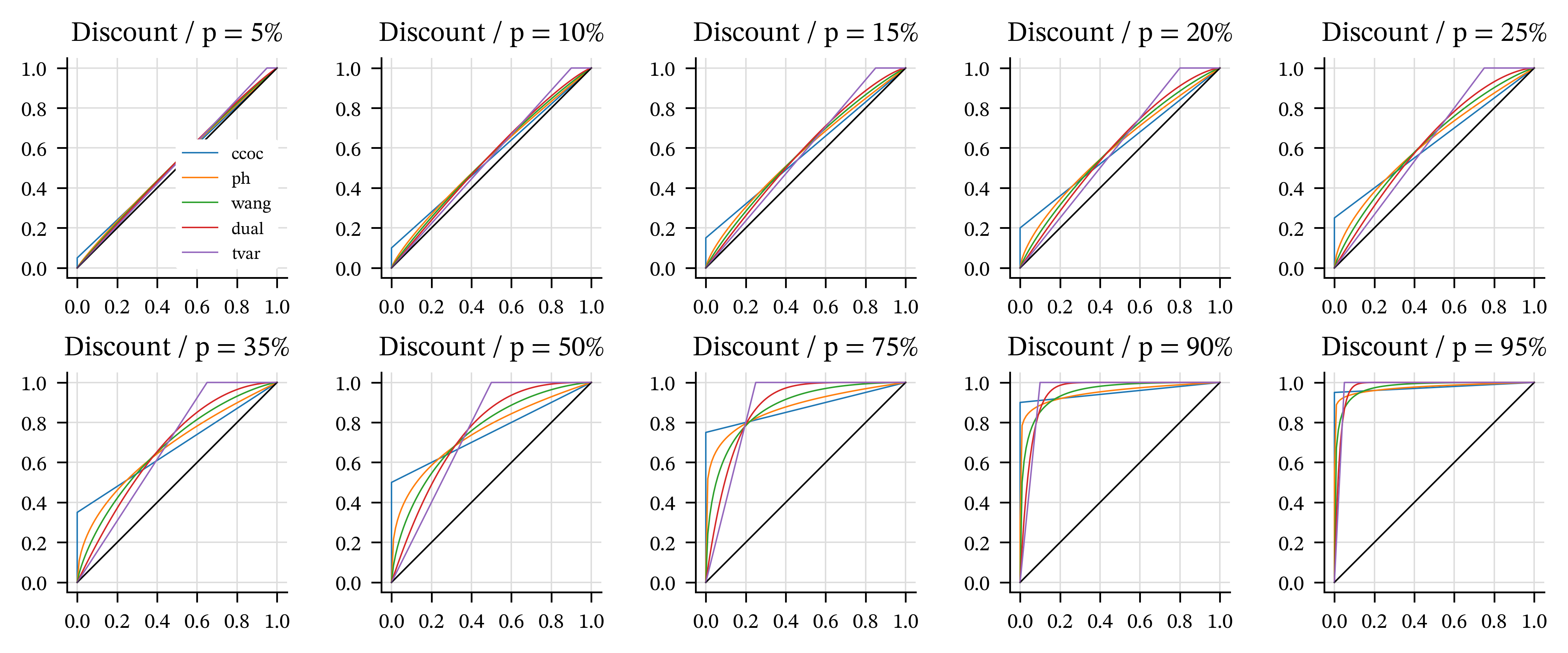

Table 4.7 gives a way to create examples of distortions that are balanced in that each has the same price for the uniform distribution. These are useful in constructing examples. Simply select \(p\) in \([0, 1]\) and use the five distortions with parameters given by the second column (in terms of \(p\)) in Table 4.7.

The ordering TVaR to CCoC corresponds most tail-centric (TVaR is cheapest for tail risk, CCoC most expensive) to tail-phobic (TVaR is most expensive for body risk, CCoC cheapest). In all cases higher \(p\) corresponds to a higher price, \(p=0\) to the mean and \(p=1\) to the maximum.

Table 4.8 shows the parameters for a range of \(p\) values. Remember, \(p\) corresponds to the TVaR \(p\) and to the discount rate for CCoC. Thus, a reasonable pricing range is less than about 25% and a reasonable capital measure range is above 90%. Figure 4.8 shows the corresponding distortion functions. These parameter ranges and correspondences are a handy reference for deciding reasonable test distortions. The graphs illustrate the symmetries discussed in Section 4.3.5. \(\quad\square\)

| p | r | ccoc | ph | wang | dual | tvar |

|---|---|---|---|---|---|---|

| 5.0% | 5.3% | 0.05 | 0.905 | 0.0887 | 1.11 | 0.05 |

| 10.0% | 11.1% | 0.1 | 0.818 | 0.178 | 1.22 | 0.1 |

| 15.0% | 17.6% | 0.15 | 0.739 | 0.267 | 1.35 | 0.15 |

| 20.0% | 25.0% | 0.2 | 0.667 | 0.358 | 1.5 | 0.2 |

| 25.0% | 33.3% | 0.25 | 0.6 | 0.451 | 1.67 | 0.25 |

| 35.0% | 53.8% | 0.35 | 0.481 | 0.642 | 2.08 | 0.35 |

| 50.0% | 100.0% | 0.5 | 0.333 | 0.954 | 3 | 0.5 |

| 75.0% | 300.0% | 0.75 | 0.143 | 1.63 | 7 | 0.75 |

| 90.0% | 900.0% | 0.9 | 0.0526 | 2.33 | 19 | 0.9 |

| 95.0% | 1900.0% | 0.95 | 0.0256 | 2.77 | 39 | 0.95 |

4.4.6 Calibrating Distortions to Market Pricing

It is easy to calibrate a single-parameter family of distortions to achieve a target price on a given risk. Simply use the Newton-Raphson method, or bisection method. The five representative distortions all price monotonically with parameter making numerical methods very reliable. The aggregate.Portfolio class has a built in method to calibrate using this method. The price can be expressed as a target loss ratio or return on equity, and equity levels can be specified directly or by giving a return period probability. See REF-HELP.

4.4.7 The Spectral Representation Theorem II

In this section we present the second of two representation theorems for spectral measures; the first, in Section 4.4.5, draws the connection between a SRM and a distortion function. This one applies more general results from the theory of coherent risk measures to SRMs specifically. It is needed in the next section to calculate and interpret the natural allocation. Throughout, \(X\) denotes a loss (larger is worse) and \((\Omega,\mathcal F, \mathsf P)\) is an atomless probability space.

There are three equivalent ways to view a spectral risk measure (SRM):

- a primal form, as a weighted average of quantiles (the spectrum budget view),

- a dual form, as a worst-case expected value over a set of probability measures \(\mathcal Q\) (the scenario or stressed measure view), and

- a risk adjusted probability form, using the contact (subgradient) function \(Z = d\mathsf Q/d\mathsf P\) to effect the adjustment.

In 3., \(Z\) is chosen to attain the dual bound, and it acts like a tangent.

By REF (b) we know that for positive \(X\), a distortion defines an SRM via \[ g(X) = \int_0^1 q_X(u)\,d\check g(u), \] interpreted as a Stieltjes integral.

When \(g\) is absolutely continuous, write \(d\check g(u)=\phi(u)\,du\) with \(\phi(u)=g'(1-u)\) a.e. Then \[ g(X) = \int_0^1 q_X(u)\,\phi(u)\,du, \] and \(\phi\) is a spectrum: \(\phi\ge 0\), \(\int_0^1\phi(u)\,du=1\), and \(\phi\) is nonincreasing.

The dual representation writes the operator \(g\) as a supremum of expectations under alternative measures.

Theorem 4.3 (Dual representation with explicit densities.) Let \(g\) concave distortion and let \(g(X)\) denote the associated SRM. Then there exists a set of probability measures \(\mathcal Q\) such that \[ g(X) = \sup_{\mathsf Q\in\mathcal Q} \mathsf Q(X) = \sup_{Z\in\mathcal Z} \mathsf P(XZ), \tag{4.12}\] where \(\mathcal Z\) is the set of Radon–Nikodym derivatives \(Z=d\mathsf Q/d\mathsf P\) that satisfy:

- \(Z\ge 0\) and \(\mathsf P(Z)=1\) (so \(\mathsf Q\) is a probability), and

- the spectral budget (majorization) constraint \[ \int_t^1 q_Z(s)\,ds \le g(1-t),\qquad \text{for all } t\in[0,1]. \]

Theorem 4.3 says the dual feasible \(Z\) are exactly those functions whose integrated quantiles sit below the distortion curve. That is, you are allowed to “tilt” probability toward adverse scenarios (large \(X\)), but only up to the distortion budget \(g\). The more concave \(g\) is (the more risk averse), the larger the allowed tail mass of \(Z\).